Content moderation in Google Workspace will allow IT managers to report abusive speech

Content moderation is coming to Google Workspace later this year as part of the new Google Chat infrastructure. Google announced a range of new features for Google Chat, and alongside the AI-enhanced capabilities meant to make work more engaging, the company also plans to crack down on bad behavior in Chat.

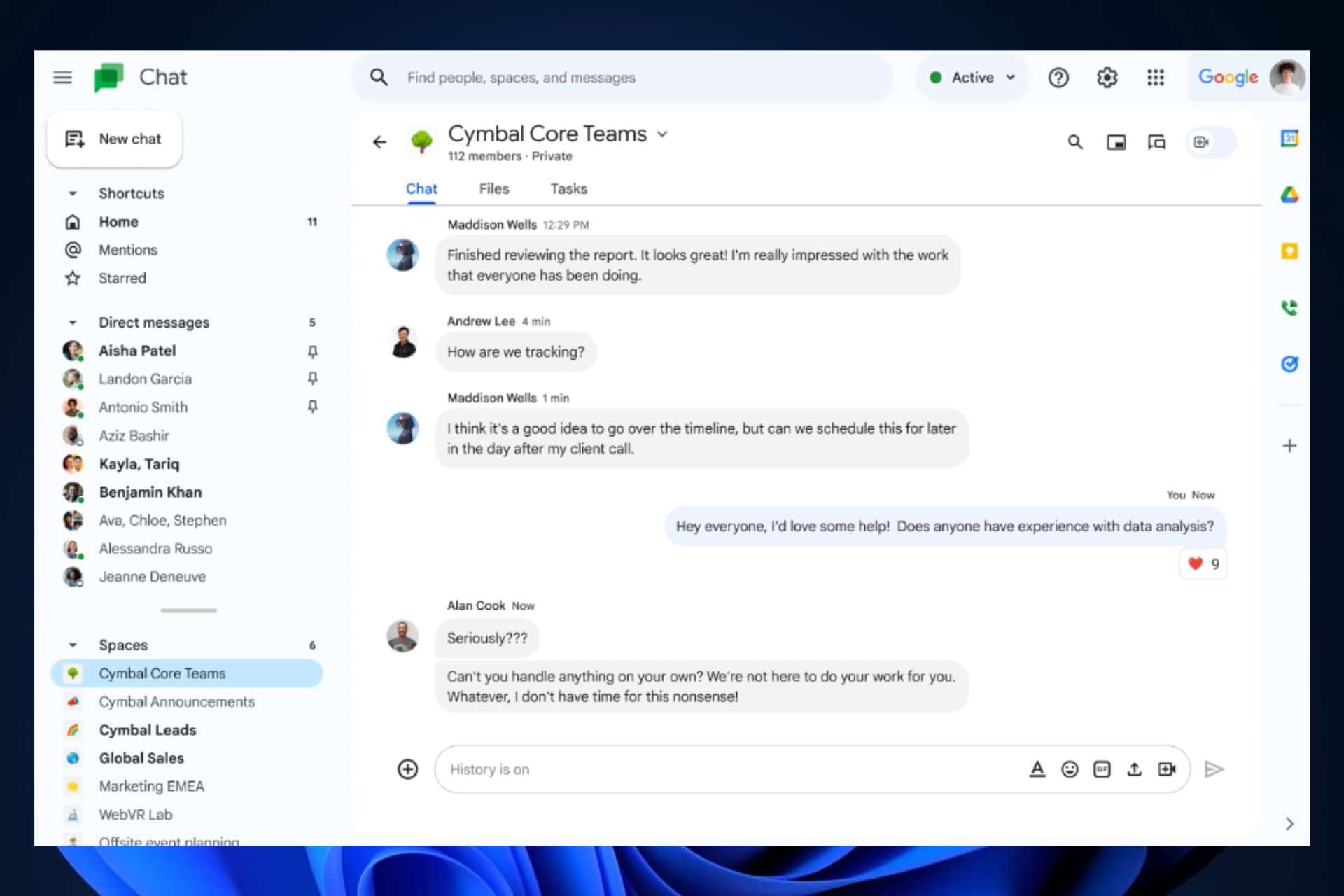

A safer environment is not only about eliminating the risk of phishing, malware, or spam. It also means empathy, kindness, and respect among coworkers, and Google plans to take action against harassment and abuse in Google Chat.

> To help admins better manage privacy and security in Chat, while helping communities to thrive in their organizations, by the end of this year we’re rolling out content moderation in the admin console, which gives administrators a centralized tool for incident review.

Content moderation in Google Workspace should be easy to use. IT admins will be able to report any message that displays abuse or harassment toward someone. As Google said, admins will also have a centralized tool for moderating content, so they won’t have to jump from one tab to another: moderation will happen in a single panel.

Google Workspace is also getting a number of new features aimed at improving collaboration. These include:

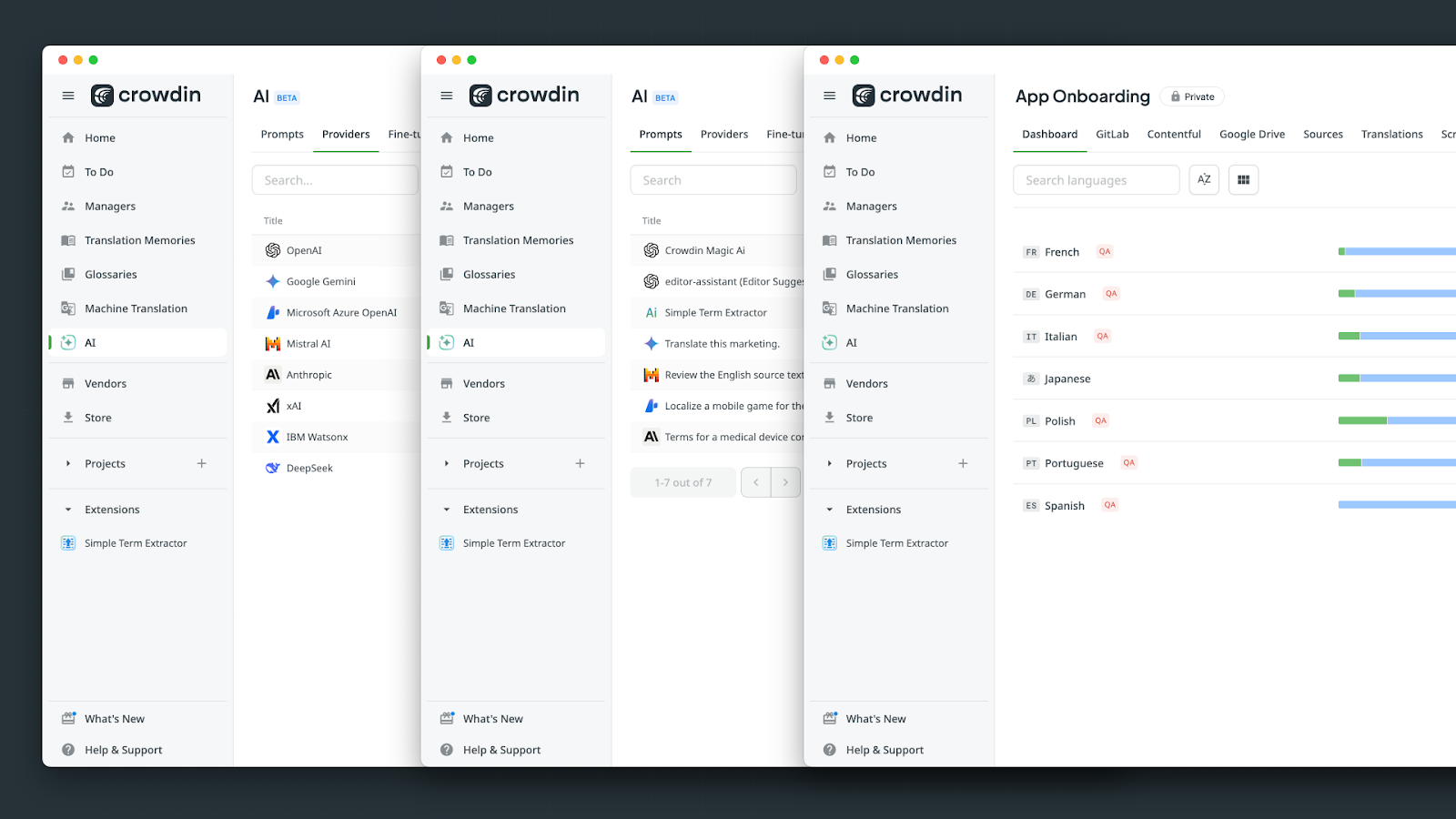

- a dedicated section for apps in the conversation list

- an updated Google Drive app that lets you respond to comments and sharing requests

- third-party apps for Google Chat including Workday, Loom, Zoho, and LumApps, which join Google’s already deep bench of supported apps, including Asana, Salesforce, Zendesk, PagerDuty, and Jira

- the ability to create no-code, custom apps right in Chat, thanks to Duet AI in AppSheet

Are you excited about the new Google Workspace?
