Microsoft's new AI tool makes your imagination reality


Imagine being able to generate high-quality photos just by describing them to a computer. This sci-fi scenario is now a reality, thanks to Microsoft’s new AI tool.

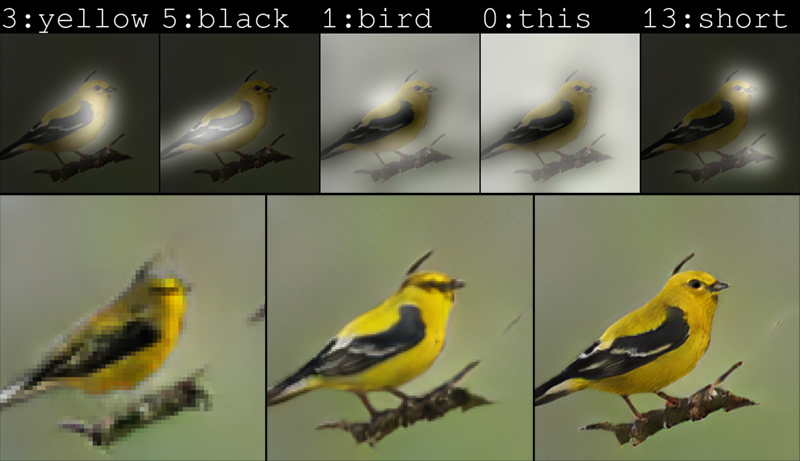

Drawing Bot created the above image simply from the description “a bird with a yellow body, black wings and a short beak.” It uses a new technique in which the AI pays close attention to individual words when generating images from caption-like text descriptions. The result is a threefold boost in image quality compared to other text-to-image generation techniques.

The bot can do more than just birds, being able to draw everything from ordinary pastoral scenes, such as grazing livestock, to the absurd, such as a floating double-decker bus.

“If you go to Bing and you search for a bird, you get a bird picture. But here, the pictures are created by the computer, pixel by pixel, from scratch,” said Xiaodong He, a principal researcher and research manager in the Deep Learning Technology Center at Microsoft’s research lab in Redmond, Washington. “These birds may not exist in the real world — they are just an aspect of our computer’s imagination of birds.”

The team started with CaptionBot, which automatically writes captions for images (used on Facebook, for example, to tag images for accessibility purposes). Next came Seeing AI, which lets visually impaired users point their phone camera at a scene and have it described to them. Drawing Bot is the latest step.

“Now we want to use the text to generate the image,” said Qiuyuan Huang, a postdoctoral researcher in He’s group and a paper co-author. “So, it is a cycle.”

Drawing Bot is built on a Generative Adversarial Network, or GAN, in which one AI network, the generator, attempts to get fake pictures past another AI network, the discriminator. As the discriminator gets better at spotting fakes, it pushes the generator toward producing ever more convincing images.
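The tug-of-war between generator and discriminator can be sketched with two toy loss functions. This is only an illustration under assumptions, not the researchers' code: the function names and the probability inputs are hypothetical, and the losses are the standard binary cross-entropy form commonly used to train GANs.

```python
import math

def discriminator_loss(p_real, p_fake):
    """Loss for the discriminator.

    p_real: probability the discriminator assigns to a real image being real.
    p_fake: probability it assigns to a generated image being real.
    The loss is low when real images score high and fakes score low.
    """
    return -(math.log(p_real) + math.log(1.0 - p_fake))

def generator_loss(p_fake):
    """Loss for the generator (non-saturating form).

    The loss is low when the discriminator is fooled, i.e. when it
    assigns a high "real" probability to a generated image.
    """
    return -math.log(p_fake)

# A sharp discriminator: confident on real images, unconvinced by fakes.
sharp_d = discriminator_loss(p_real=0.9, p_fake=0.1)
# A confused discriminator: it cannot tell real from fake.
confused_d = discriminator_loss(p_real=0.5, p_fake=0.5)

# The generator's loss falls as its fakes become more convincing.
weak_g = generator_loss(p_fake=0.1)
strong_g = generator_loss(p_fake=0.9)
```

In real training, each network would be updated in turn to lower its own loss, which is what drives the generator to improve.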

The new technique improves on the state of the art by concentrating on the different parts of the sentence in turn, for example first drawing a yellow bird, then the black wings and then the short beak.

“Attention is a human concept; we use math to make attention computational,” explained He.
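One common way to make attention computational is to score each word against the part of the image being drawn, then turn the scores into weights with a softmax. The sketch below is a toy illustration of that idea, not the model from the paper: the three-dimensional word vectors, the `wing_region` query and the `attend` helper are all made up for the example.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(region, word_vectors):
    """Weight each word by its relevance to one image region.

    Returns the attention weights and the weighted average of the
    word vectors (the "context" used when drawing that region).
    """
    scores = [sum(r * w for r, w in zip(region, vec)) for vec in word_vectors]
    weights = softmax(scores)
    context = [
        sum(wt * vec[i] for wt, vec in zip(weights, word_vectors))
        for i in range(len(region))
    ]
    return weights, context

words = ["yellow", "body", "black", "wings", "short", "beak"]
# Hypothetical toy embeddings; a real model would learn these.
word_vectors = [
    [1.0, 0.0, 0.0],  # yellow
    [0.8, 0.1, 0.0],  # body
    [0.0, 1.0, 0.1],  # black
    [0.1, 0.9, 0.0],  # wings
    [0.0, 0.1, 1.0],  # short
    [0.0, 0.0, 0.9],  # beak
]

# Hypothetical feature vector for the image region where the wings go.
wing_region = [0.0, 2.0, 0.0]
weights, context = attend(wing_region, word_vectors)
most_relevant = words[weights.index(max(weights))]
```

With these made-up vectors, the wing region puts most of its weight on “black” and “wings” and very little on “yellow” or “beak”.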

“We can control what we describe and see how the machine reacts,” He added. “We can poke and test what the machine learned. The machine has some background learned commonsense, but it can still follow what you ask and maybe, sometimes, it seems a bit ridiculous.”

Text-to-image generation technology could find practical applications acting as a sort of sketch assistant to painters and interior designers, or as a tool for voice-activated photo refinement. With more computing power, He imagines the technology could generate animated films based on screenplays, augmenting the work that animated filmmakers do by removing some of the manual labor involved.

“For AI and humans to live in the same world, they have to have a way to interact with each other,” explained He. “And language and vision are the two most important modalities for humans and machines to interact with each other.”

The full paper describing the research can be found on arXiv.org.

via Microsoft.com
