Microsoft Research's Fusion4D project can enable high fidelity immersive telepresence

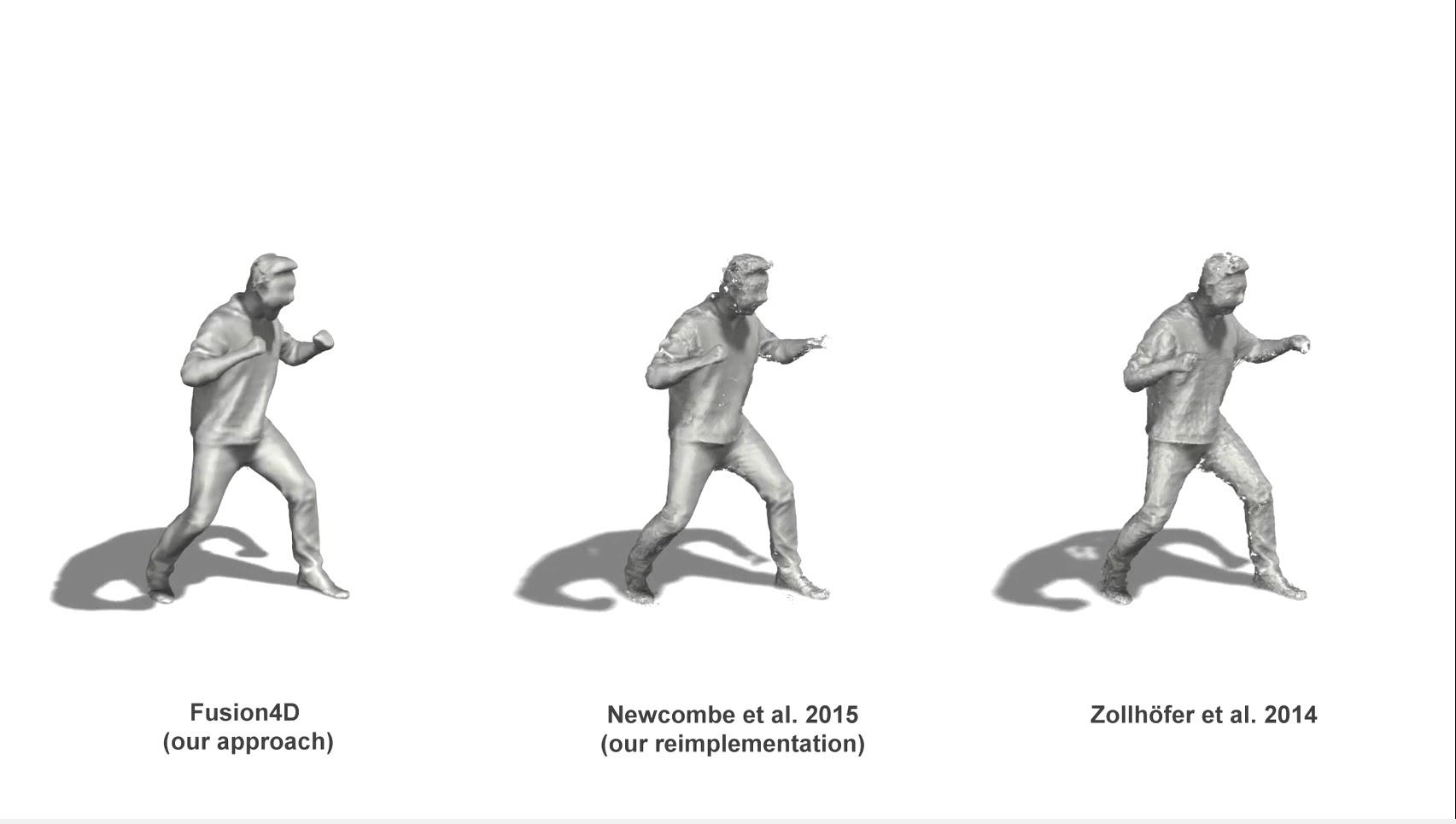

Microsoft Research recently revealed details about its latest project, Fusion4D, a new pipeline for live multi-view performance capture that generates temporally coherent, high-quality reconstructions in real time. The algorithm supports both incremental reconstruction, which improves the surface estimate over time, and parameterization of the nonrigid scene motion.

According to the researchers, the approach is highly robust to both large frame-to-frame motion and topology changes, which allows it to reconstruct extremely challenging scenes. They demonstrate advantages over related real-time techniques that either deform an online-generated template or continually fuse depth data nonrigidly into a single reference model. Finally, they show geometric reconstruction results on par with offline methods, which require orders of magnitude more processing time and many more RGBD cameras.

MSR believes that this work can enable new types of live performance capture experiences, such as broadcasting live events, including sports and concerts, in 3D. It could also make it possible to capture people live and re-render them in other geographic locations, enabling high-fidelity immersive telepresence. Learn more about this project from Microsoft Research.

It is also worth noting that most of the researchers who worked on this project at Microsoft Research have since left the company to start a new company named PerceptiveIO. The researchers who contributed to this project are listed below. Everyone except Yury Degtyarev and Pushmeet Kohli is now at PerceptiveIO.

Mingsong Dou (2), Sameh Khamis (2), Yury Degtyarev (1), Philip Davidson (2), Sean Ryan Fanello (2), Adarsh Kowdle (2), Sergio Orts Escolano (2), Christoph Rhemann (2), David Kim (2), Jonathan Taylor (2), Pushmeet Kohli (1), Vladimir Tankovich (2), Shahram Izadi (2)

(1) Microsoft Research (2) perceptiveIO
