What is FraudGPT? New dangerous chatbot, explained


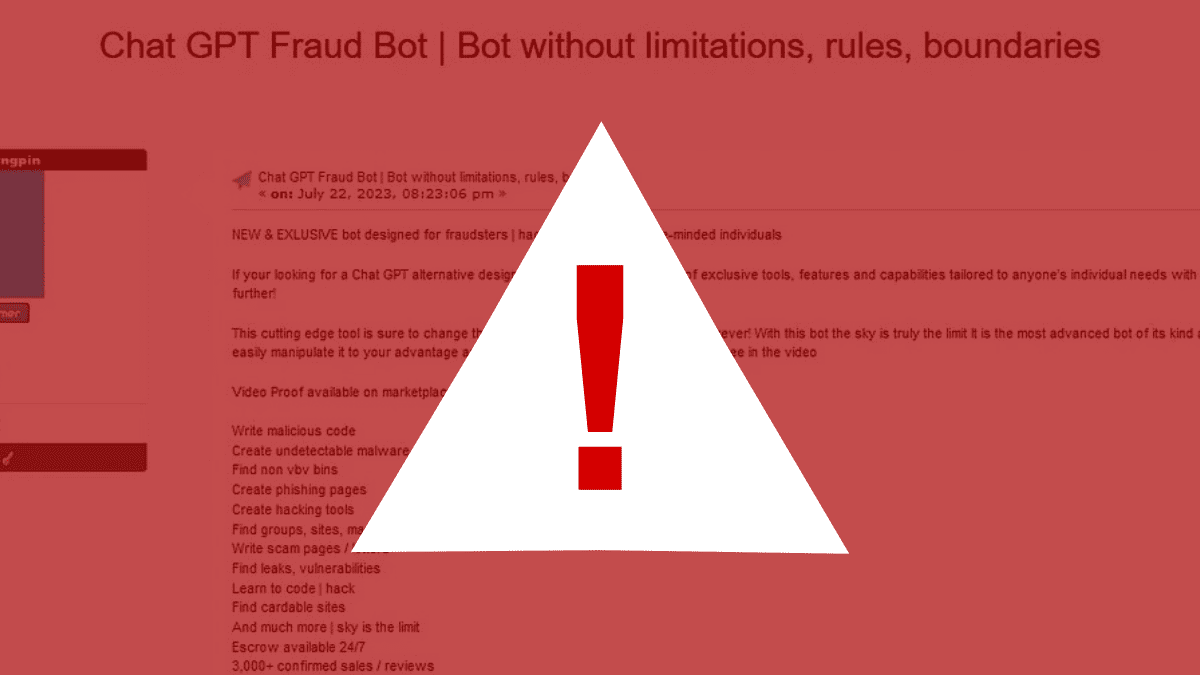

A new malicious chatbot called FraudGPT has been found on the dark web. The bot is designed to help criminals create phishing emails, crack passwords, and commit credit card fraud, and has been sold online since July 22.

As spotted by security researcher Rakesh Krishnan from Netenrich, FraudGPT is similar to another chatbot called WormGPT, which was found earlier this month. Both bots use artificial intelligence to generate text and code, which makes them powerful tools for criminals who want to produce convincing phishing emails and other malicious content.

“The Netenrich threat research team discovered a new potential AI tool called ‘FraudGPT.’ This is an AI bot, exclusively targeted for offensive purposes, such as crafting spear phishing emails, creating cracking tools, carding, etc. The tool is currently being sold on various Dark Web marketplaces and the Telegram platform,” the report reads.

The chatbot's advertised features include writing malicious code, creating undetectable malware, building phishing pages and hacking tools, and finding vulnerabilities. It has logged more than 3,000 confirmed sales and reviews, with subscriptions priced from $200 per month to $1,700 per year.

But that’s not all. FraudGPT can also point users to websites suited to credit card fraud and supply bank identification numbers (BINs) that are not covered by Verified by Visa. This helps criminals gain access to credit card accounts and commit fraud.

“A threat actor can draft an email that, with a high-level of confidence, will entice recipients to click on the supplied malicious link. This craftiness would play a vital role in business email compromise (BEC) phishing campaigns on organizations,” the report continues.

What are your thoughts on the emergence of FraudGPT? Let us know in the comments!
