Microsoft Research develops AI-based fix for blurred pictures due to Under-Display cameras

After the success of the punch-hole front-facing camera, the next major development for selfie cameras is the fully under-display camera. Here the camera sits behind a transparent OLED (T-OLED) screen that still works as a normal display when the camera is not active, but lets enough light through for the camera underneath to take a usable picture.

That last requirement has been the problem, and it is what Microsoft Research has been investigating.

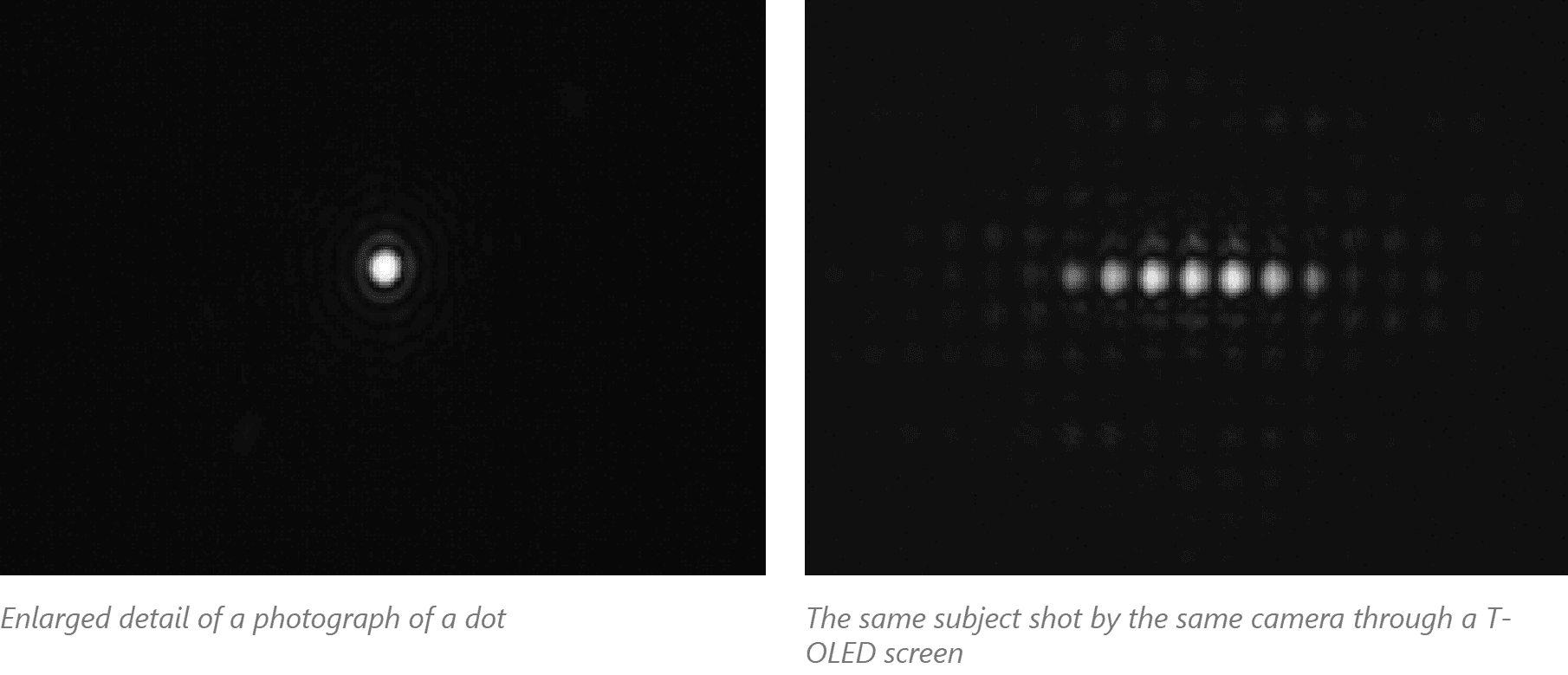

They note that diffraction from the screen’s pixel structure can blur the image, reduce contrast, reduce usable light levels, and even obstruct some image content entirely, in ways that are dependent on the device’s display-pixel design.

Fortunately, the degradation happens in a predictable fashion and, because of the pixel structure, generally only in the horizontal direction.
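To make the idea of a predictable, horizontal-only degradation concrete, here is a minimal sketch in plain Python. It is a toy model and not the researchers' method: the function name `degrade_horizontal`, the blur kernel, the `light_fraction` attenuation and the Gaussian noise are all assumptions chosen for illustration, not measured properties of any real T-OLED panel.

```python
import random


def degrade_horizontal(image, kernel, noise_sigma=0.0, light_fraction=1.0, seed=0):
    """Toy model of an under-display camera (all parameters are hypothetical).

    image          -- list of rows, each a list of floats in [0, 1]
    kernel         -- 1-D blur weights applied along each row only
    noise_sigma    -- standard deviation of added Gaussian sensor noise
    light_fraction -- share of light assumed to pass through the screen
    """
    rng = random.Random(seed)
    total = sum(kernel)
    weights = [k / total for k in kernel]  # normalise so blur alone keeps brightness
    half = len(weights) // 2
    degraded = []
    for row in image:
        width = len(row)
        new_row = []
        for x in range(width):
            acc = 0.0
            for i, w in enumerate(weights):
                # Replicate the border pixel when the kernel hangs off the edge.
                src = min(max(x + i - half, 0), width - 1)
                acc += w * row[src]
            new_row.append(light_fraction * acc + rng.gauss(0.0, noise_sigma))
        degraded.append(new_row)
    return degraded


if __name__ == "__main__":
    # A vertical edge (dark left, bright right) is smeared by the row-wise blur.
    vertical_edge = [[0.0] * 4 + [1.0] * 4 for _ in range(4)]
    blurred = degrade_horizontal(vertical_edge, kernel=[1, 2, 3, 2, 1])
    print([round(v, 2) for v in blurred[0]])
```

Because the kernel is applied along rows only, an edge that runs vertically gets smeared, while an edge that runs horizontally (every row constant) passes through unchanged. A restoration network can exploit that kind of regularity.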

To compensate for the image degradation inherent in photographing through a T-OLED screen, the researchers used a U-Net neural-network structure that both improves the signal-to-noise ratio and de-blurs the image. The team was able to achieve a recovered image that is virtually indistinguishable from an image that was photographed directly.
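One common way to put a number on how close a recovered image is to a directly photographed one is the peak signal-to-noise ratio (PSNR). The sketch below is an illustration only: the article does not say which metric the team used, and the function name `psnr` and the assumption that pixel values lie in [0, 1] are choices made here.

```python
import math


def psnr(reference, test, peak=1.0):
    """Peak signal-to-noise ratio in dB between two same-sized images.

    Both images are lists of rows of floats; `peak` is the largest
    possible pixel value (1.0 is assumed here).
    """
    squared_error = 0.0
    count = 0
    for ref_row, test_row in zip(reference, test):
        for r, t in zip(ref_row, test_row):
            squared_error += (r - t) ** 2
            count += 1
    mse = squared_error / count
    if mse == 0.0:
        return math.inf  # identical images
    return 10.0 * math.log10(peak ** 2 / mse)
```

A higher PSNR means the test image is closer to the reference, so a successful restoration should score noticeably higher against the directly photographed image than the raw through-screen capture does.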

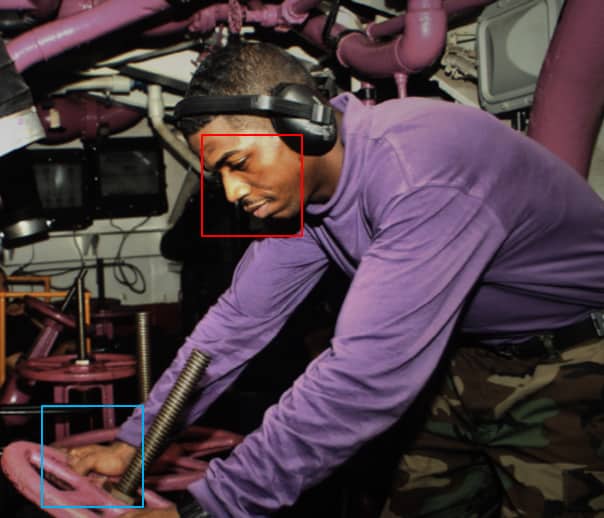

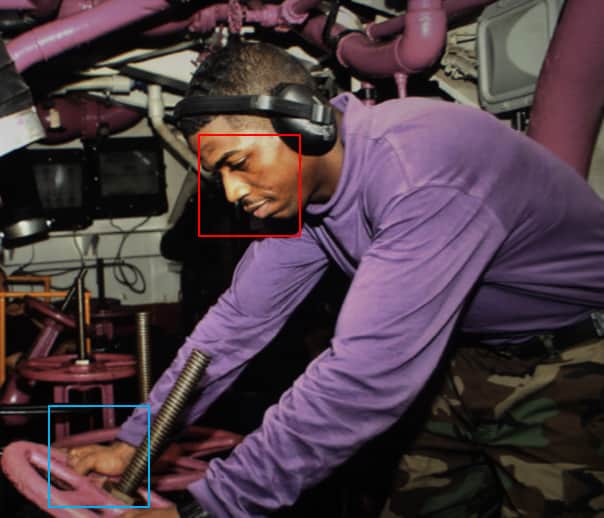

The improvement can be seen in the sample image below:

Original, photographed normally:

Photographed through a T-OLED screen:

Involving AI in picture capture also enables other interesting techniques, such as blurring or replacing the background and other video manipulation, which allow for better and more natural video calling.

Microsoft appears to be developing the work primarily for use in larger screens in video conferencing settings, but I am sure it would apply equally to your next flagship smartphone.

Read more about the project at Microsoft here.

via WalkingCat
