Microsoft patents new gesture unlock which will only work for you

Unlocking a device with a gesture is nothing new: Apple has its simple Swipe to Unlock, and Pattern Unlock is common on Android handsets.

These methods offer little security, however, especially when the pattern is clearly traced out on a greasy screen.

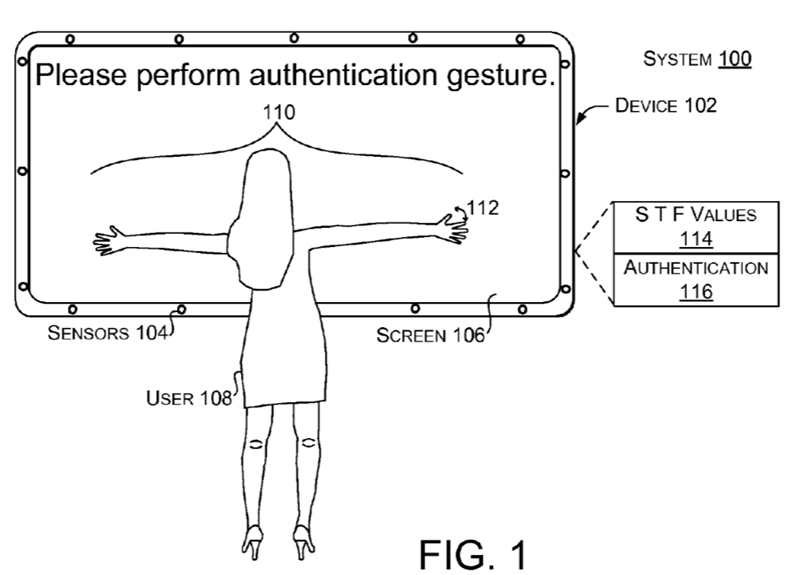

Microsoft has now patented a new technique that captures biometric information such as finger position, finger length, the angle between fingers and more during a simple gesture, and uses it to confirm that it is actually you making the unlock request.

In the patent Microsoft writes:

[0011] Today mobile users authenticate on their mobile devices (i.e., phones/tablets) through simple four-digit passcodes or gestures. Even though this process makes it easy for users to unlock their devices, it does not preserve the security of their devices. For instance, a person simply observing a user unlocking his/her phone (e.g., over the shoulder attack) can easily figure out the four-digit passcode or the gesture used to unlock the device. As a result, an authentication technique that prevents such over the shoulder attacks is desirable. Such a technique should be easy for the user to perform on the device, but hard for other users to replicate even after seeing the actual user performing it. Other devices employ a dedicated fingerprint reader for authentication. The fingerprint reader can add considerably to the overall cost of the device and require dedicated real-estate on the device. The present concepts can gather information about the user when the user interacts with the device, such as when the user performs an authentication gesture relative to the device. The information can be collectively analyzed to identify the user at a high rate of reliability. The concepts can use many types of sensor data and can be accomplished utilizing existing sensors and/or with additional sensors.

[0012] Some mobile device implementations are introduced here briefly to aid the reader. Some configurations of the present implementations can enable user-authentication based solely on generic sensor data. An underlying principal of this approach is that different users perform the same gesture differently depending on the way they interact with the mobile device, and on their hand’s geometry, size, and flexibility. These subtle differences can be picked up by the device’s embedded sensors (i.e., touch, accelerometer, and gyro), enabling user-authentication based on sensor fingerprints. Several authentication gesture examples are discussed that provide relatively high amounts of unique user information that can be extracted through the device’s embedded sensors.

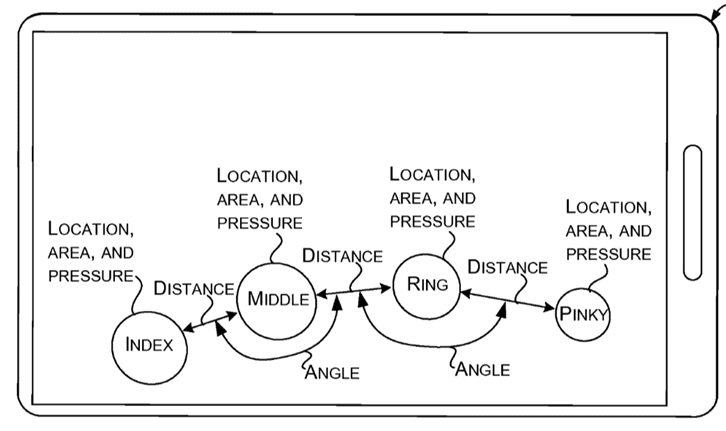

[0013] While the user performs the authentication gesture, these implementations can leverage the touch screen sensor to extract rich information about the geometry and the size of the user’s hand. In particular, the information can relate to the distance and angle between fingers, the precise timing that each finger touches and leaves the touch screen, as well as the size and pressure applied by each finger. At the same time, these implementations can leverage the embedded accelerometer and gyro sensors to record the mobile device’s displacement and rotation during the gesture. Every time a finger taps the screen, the mobile device is slightly displaced depending on how the user taps and holds the mobile device at the time of the gesture.
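To make the hand-geometry idea in [0013] concrete, here is a minimal sketch of how distances and angles between fingertips might be turned into a feature list. It is not taken from the patent: the function name `hand_geometry_features`, the pixel coordinates, and the choice to measure each angle against the screen's x-axis are all assumptions made for illustration.

```python
import math
from itertools import combinations


def hand_geometry_features(touches):
    """Return [distance, angle, distance, angle, ...] for every pair of touches.

    touches: list of (x, y) fingertip positions in pixels (hypothetical input).
    The angle is that of the line joining two fingertips, measured in degrees
    against the x-axis; this convention is an assumption, not the patent's.
    """
    features = []
    for (x1, y1), (x2, y2) in combinations(touches, 2):
        features.append(math.hypot(x2 - x1, y2 - y1))                 # distance
        features.append(math.degrees(math.atan2(y2 - y1, x2 - x1)))   # angle
    return features


# Three fingertips produce three pairs, so six feature values.
features = hand_geometry_features([(0, 0), (3, 4), (6, 0)])
print(features)
```

A real system would also fold in the timing, touch size, pressure, accelerometer and gyro readings the patent mentions; this sketch covers only the geometry.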

[0014] The present implementations can utilize multiple biometric features (e.g., parameters) that are utilized as the user interacts with the device to identify the user. User interaction with the device tends to entail movement of the user (and/or the device). In some of the present implementations, the movement can relate to an authentication gesture (e.g., log-in gesture). During a training session, the user may repeatedly perform the authentication gesture. Values of multiple different biometric features can be detected from the training authentication gestures during the training period. A personalized similarity threshold value can be determined for the user from the values of the training authentication gestures. Briefly, the personal similarity threshold can reflect how consistent (or inconsistent) the user is in performing the authentication gesture. Stated another way, the personalized similarity threshold can reflect how much variation the user has in performing the authentication gesture.

[0015] Subsequently, a person can perform the authentication gesture to log onto the device (or otherwise be authenticated by the device). Biometric features values can be detected from the authentication gesture during log-in and compared to those from the training session. A similarity of the values between the log-in authentication gesture and the training session can be determined. If the similarity satisfies the personalized similarity threshold, the person attempting to log-in is very likely the user. If the similarity does not satisfy the personalized similarity threshold, the person is likely an imposter.
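The training and log-in steps in [0014] and [0015] can be sketched roughly as follows. The excerpt does not say how the personalized threshold is computed, so this example assumes a simple rule: measure how far apart the user's own training gestures are from one another, and accept a log-in attempt whose average distance to the training gestures is within the mean plus `k` standard deviations of that spread. The names `personalized_threshold` and `authenticate`, the Euclidean distance, and the value of `k` are all hypothetical. Note that the sketch uses a distance, so a lower score means more similar.

```python
import math
from itertools import combinations
from statistics import mean, pstdev


def distance(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def personalized_threshold(training, k=2.0):
    """Derive a per-user threshold from how much the training gestures vary.

    A consistent user gets a tight threshold, an inconsistent one a looser
    threshold. The mean + k * std rule is an assumption for illustration.
    """
    dists = [distance(a, b) for a, b in combinations(training, 2)]
    return mean(dists) + k * pstdev(dists)


def authenticate(attempt, training, threshold):
    """Accept the attempt if it is, on average, close enough to training."""
    score = mean(distance(attempt, t) for t in training)
    return score <= threshold


# Hypothetical feature vectors: [finger distance in px, finger angle in degrees]
training = [[100, 45], [102, 44], [98, 46]]
threshold = personalized_threshold(training)

owner_ok = authenticate([101, 45], training, threshold)
imposter_ok = authenticate([130, 30], training, threshold)
print(owner_ok, imposter_ok)
```

In practice the features would need to be normalized so that, say, pixel distances do not swamp angles, and the threshold would be tuned against real false-accept and false-reject rates.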

[0016] From one perspective, the user-authentication process offered by the present implementations can be easy and fast for users to perform, and at the same time difficult for an attacker to accurately reproduce even by directly observing the user authenticating on the device.

Interestingly, the idea would work across a wide variety of screen sizes, all the way up to the Xbox One with Kinect.

The idea seems rather beautiful in its simplicity, and we hope we will eventually see it show up in real devices.

The full patent can be seen here.

Thanks, DH Dog, for the tip.
