Microsoft patents a "Multiple Stage Shy User Interface" designed for 3D Touch

Microsoft is building up quite a portfolio of 3D Touch-based user interface patents, with the latest being a refinement of the so-called shy user interface elements commonly found in many operating systems.

Examples of “shy” user interfaces include video controls that disappear unless you touch the screen, and browser address bars that vanish while you are reading but not touching the page.

What these controls have in common is that there is only one level of interaction: they are either visible or invisible.

Microsoft’s refinement would add multiple stages to the interaction, gathering intent from more than a simple touch of the screen, for example from your finger approaching the screen or hovering over the controls. These movements would be detected by 3D Touch sensors like those Microsoft has already demonstrated in the Nokia McLaren.

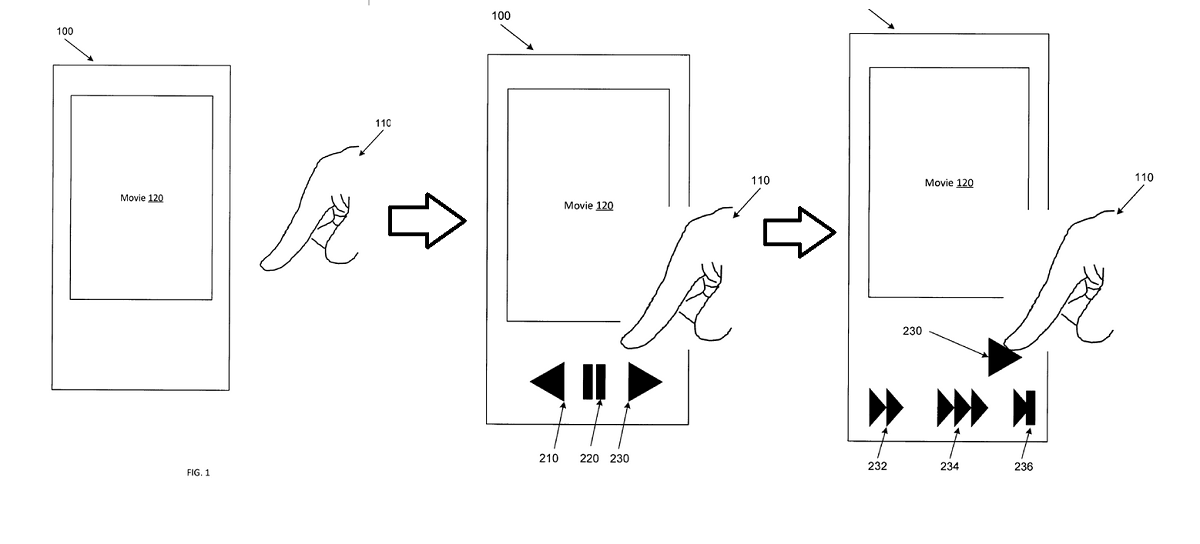

In the example above, a user would approach a video player with their finger, which would reveal the controls. As the finger gets closer to the fast forward button, different fast forward speeds would be revealed as a second stage, making the interaction more fluid and convenient.
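To make the staged behavior concrete, here is a minimal Python sketch of the video player example. It assumes a hover sensor that reports the pointer's height above the screen and the control underneath it; the distance thresholds, the control names and the `visible_controls` function are hypothetical illustrations, not anything taken from Microsoft's patent.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical thresholds in millimetres above the glass; the patent gives no values.
REVEAL_CONTROLS_MM = 40.0   # stage 1: pointer is near the video
REVEAL_SPEEDS_MM = 15.0     # stage 2: pointer is close to a specific control


@dataclass
class HoverSample:
    distance_mm: float        # pointer height above the screen (0.0 = touching)
    target: Optional[str]     # control under the pointer, or None


def visible_controls(sample: HoverSample) -> List[str]:
    """Return the video controls to show for a single hover reading."""
    if sample.distance_mm > REVEAL_CONTROLS_MM:
        return []  # stage 0: controls stay hidden, i.e. "shy"
    controls = ["play_pause", "rewind", "fast_forward"]  # stage 1: top layer
    if sample.target == "fast_forward" and sample.distance_mm <= REVEAL_SPEEDS_MM:
        controls += ["ff_2x", "ff_4x", "ff_8x"]  # stage 2: speed options
    return controls


# A finger approaching the fast forward button, sampled at three heights.
for d in (60.0, 30.0, 10.0):
    print(d, visible_controls(HoverSample(d, "fast_forward")))
```

The point of the sketch is that each stage is a function of where the finger is, not just whether it has touched the glass.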

In the patent’s own words:

> Example apparatus and methods improve over conventional approaches to human-to-device interaction by revealing UI elements or functionality in multiple context-dependent stages based on discerning a user’s intent. Example apparatus and methods may rely on three dimensional (3D) touch or hover sensors that detect the presence, position, orientation, direction of travel, or rate of travel of a pointer (e.g., user’s finger, implement, stylus, pen). The 3D sensors may provide information from which a determination can be made concerning at which object, element, or region of an interface a user is pointing, gesturing, or otherwise interacting with or indicating an intent to interact with. If the user had not been actively navigating on the device, then example apparatus and methods may surface (e.g., display) context relevant UI elements or actions that were not previously displayed. For example, if a user is watching a video, then a top layer of video control elements may be displayed. Unlike conventional systems, if the user then maintains or narrows their focus on or near a particular UI element that was just revealed, a second or subsequent layer of video control elements or effects may be surfaced. Subsequent deeper layers in a hierarchy may be presented as the user interacts with the surfaced elements.
>
> Example apparatus and methods may facilitate improved, faster, and more intuitive interactions with a wider range of UI elements, controls, or effects without requiring persistent onscreen affordances or hidden gestures that may be difficult to discover or learn. Single layer controls that are revealed upon detecting a screen touch or gesture are well known. These controls that are selectively displayed upon detecting a screen touch or gesture may be referred to as “shy” controls. Example apparatus and methods improve on these single layer touch-based approaches by providing multi-layer shy controls and effects that are revealed in a context-sensitive manner based on inputs including touch, hover, tactile, or voice interactions. Hover sensitive interfaces may detect not just the presence of a user’s finger, but also the angle at which the finger is pointing, the rate at which the finger is moving, the direction in which the finger is moving, or other attributes of the finger. Thus, rather than a binary {touch: yes/no} state detection, a higher order input space may be analyzed to determine a user’s intent with respect to a user interface element. 3D proximity detectors may determine which object or screen location the user intends to interact with and may then detect and characterize multiple attributes of the interaction.
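The “higher order input space” the patent describes can be illustrated by combining several pointer attributes instead of a single touch flag. In this hypothetical Python sketch, distance, direction of travel and dwell time together decide how many layers of shy controls to reveal: pulling the finger away quickly hides everything, while holding focus over an element opens deeper layers. The constants, `PointerState` and `reveal_depth` are our own assumptions; the patent does not publish an algorithm.

```python
from dataclasses import dataclass

# Hypothetical tuning constants; the patent text gives no numbers.
MAX_HOVER_MM = 50.0        # beyond this height the pointer is ignored
WITHDRAW_MM_S = 100.0      # moving away faster than this reads as "not interested"
DWELL_PER_LAYER_S = 0.4    # sustained focus needed to open each deeper layer


@dataclass
class PointerState:
    distance_mm: float     # height above the screen; 0.0 means touching
    approach_mm_s: float   # positive when moving toward the screen, negative when withdrawing
    dwell_s: float         # how long the pointer has stayed over the same element


def reveal_depth(p: PointerState, max_depth: int = 3) -> int:
    """Return how many layers of shy controls to show (0 = all hidden)."""
    if p.distance_mm > MAX_HOVER_MM:
        return 0  # pointer too far away to signal any intent
    if p.approach_mm_s < -WITHDRAW_MM_S:
        return 0  # rapid withdrawal: hide the controls again
    # One layer for being in range, plus one more per dwell interval of sustained focus.
    depth = 1 + int(p.dwell_s // DWELL_PER_LAYER_S)
    return min(depth, max_depth)


print(reveal_depth(PointerState(distance_mm=30.0, approach_mm_s=20.0, dwell_s=1.0)))
```

Treating dwell time as the signal for a user who “maintains or narrows their focus” is only one reading of the patent's wording; a real system could equally weight how close the finger gets or the angle at which it points.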

It is not known whether Microsoft is still working on 3D Touch for its phones, but the technology would be equally applicable to RealSense cameras, such as those used for Windows Hello, or to HoloLens via gaze sensing and hand tracking.

Would you appreciate a user interface that responds dynamically to your intent, or would you prefer one that remains fixed and predictable? Let us know below.
