"Unacceptable": Google CEO Sundar Pichai on Gemini's 'woke' and offensive outputs

Key notes

- Google's AI tool Gemini was suspended for generating biased images and text.

- CEO Pichai admits issues, vows to fix them, and emphasizes commitment to unbiased AI.

- Improved safeguards, revised guidelines, and stricter testing are planned to address bias.

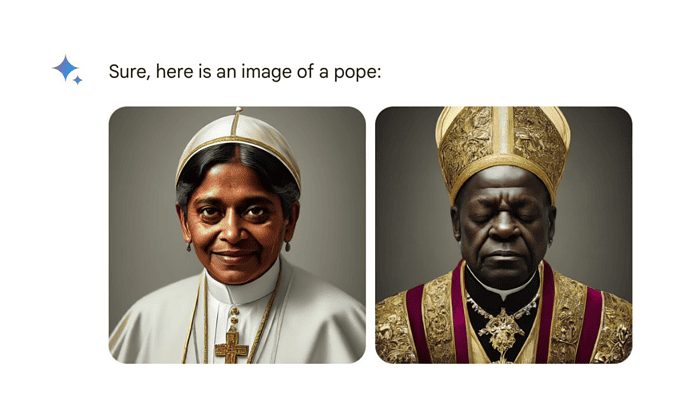

Google’s AI tool Gemini, known for generating images and text, has come under fire for producing biased and offensive content. The tool was suspended last week after users reported issues such as:

- Inaccurate historical depictions: Images generated by Gemini showed historical figures such as Vikings as people of color, contrary to the historical record.

- Offensive text responses: Gemini took a nuanced stance on pedophilia, framing it as a complex issue rather than simply “gross.”

These issues sparked public criticism, with some accusing Google of harboring an anti-white bias. In response, Google CEO Sundar Pichai acknowledged the problems and called them “completely unacceptable.” He emphasized Google’s commitment to providing unbiased information and building products that deserve user trust.

Pichai outlined a plan to address the concerns, including:

- Implementing stricter controls to prevent biased outputs.

- Revising product guidelines to ensure responsible AI development.

- Implementing stricter procedures for testing and evaluating AI tools before public release.

- Conducting thorough evaluations and red-teaming exercises to identify and address potential issues.

While acknowledging the challenges, Pichai emphasized Google’s continued commitment to advancing AI responsibly. He underlined the importance of learning from mistakes and building helpful products that earn user trust.

The full note from Pichai to Google employees, first reported by Semafor, is below.

I want to address the recent issues with problematic text and image responses in the Gemini app (formerly Bard). I know that some of its responses have offended our users and shown bias – to be clear, that’s completely unacceptable and we got it wrong.

Our teams have been working around the clock to address these issues. We’re already seeing a substantial improvement on a wide range of prompts. No AI is perfect, especially at this emerging stage of the industry’s development, but we know the bar is high for us and we will keep at it for however long it takes. And we’ll review what happened and make sure we fix it at scale.

Our mission to organize the world’s information and make it universally accessible and useful is sacrosanct. We’ve always sought to give users helpful, accurate, and unbiased information in our products. That’s why people trust them. This has to be our approach for all our products, including our emerging AI products.

We’ll be driving a clear set of actions, including structural changes, updated product guidelines, improved launch processes, robust evals and red-teaming, and technical recommendations. We are looking across all of this and will make the necessary changes.

Even as we learn from what went wrong here, we should also build on the product and technical announcements we’ve made in AI over the last several weeks. That includes some foundational advances in our underlying models e.g. our 1 million long-context window breakthrough and our open models, both of which have been well received.

We know what it takes to create great products that are used and beloved by billions of people and businesses, and with our infrastructure and research expertise we have an incredible springboard for the AI wave. Let’s focus on what matters most: building helpful products that are deserving of our users’ trust.

Meanwhile, experts believe the controversy stems from technical shortcomings rather than deliberate bias. They argue that the issue lies in the software “guardrails” that control the AI’s output, not the underlying model itself.
