Instead of 5 cameras, HoloLens 2.0 may have just one

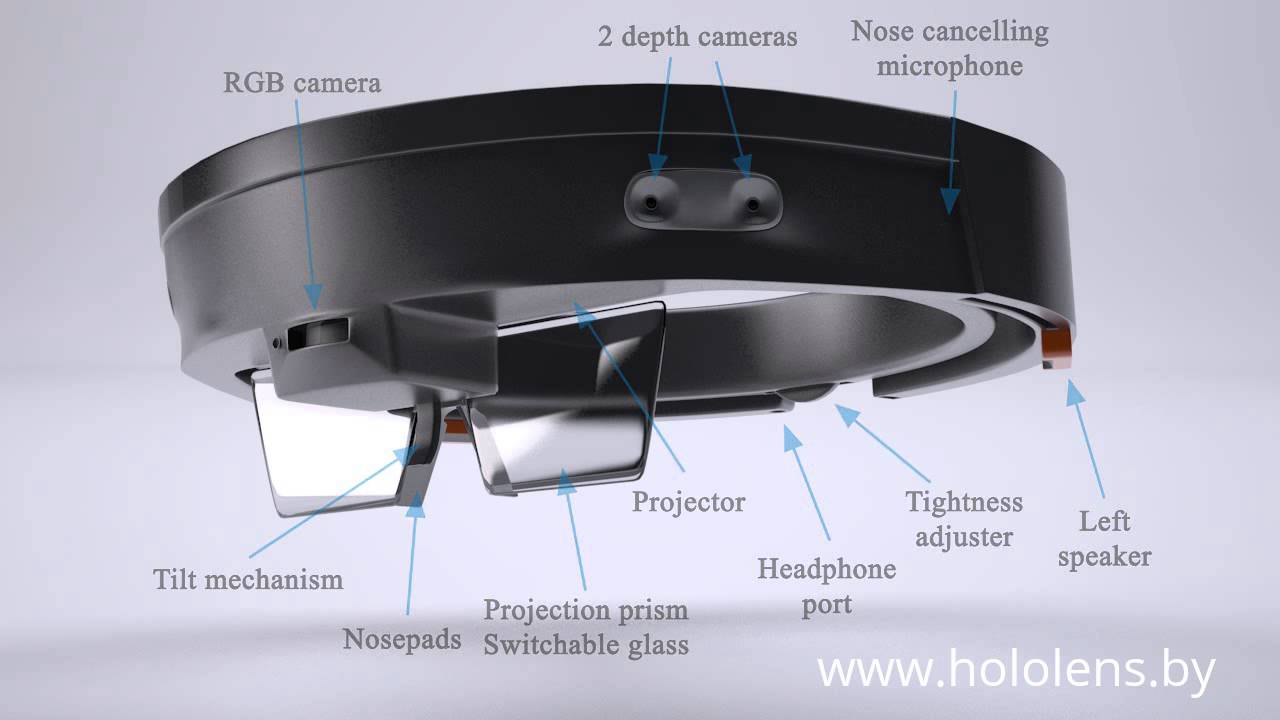

The current-generation HoloLens (above) actually has 5 cameras: two on each side for depth sensing using infrared dot emitters, much like the Kinect, and a centre RGB camera that uses regular ambient light for Simultaneous Localization and Mapping (SLAM). This is similar to how the cameras in Windows Mixed Reality headsets calculate your relative motion and position from the scenery in your environment.

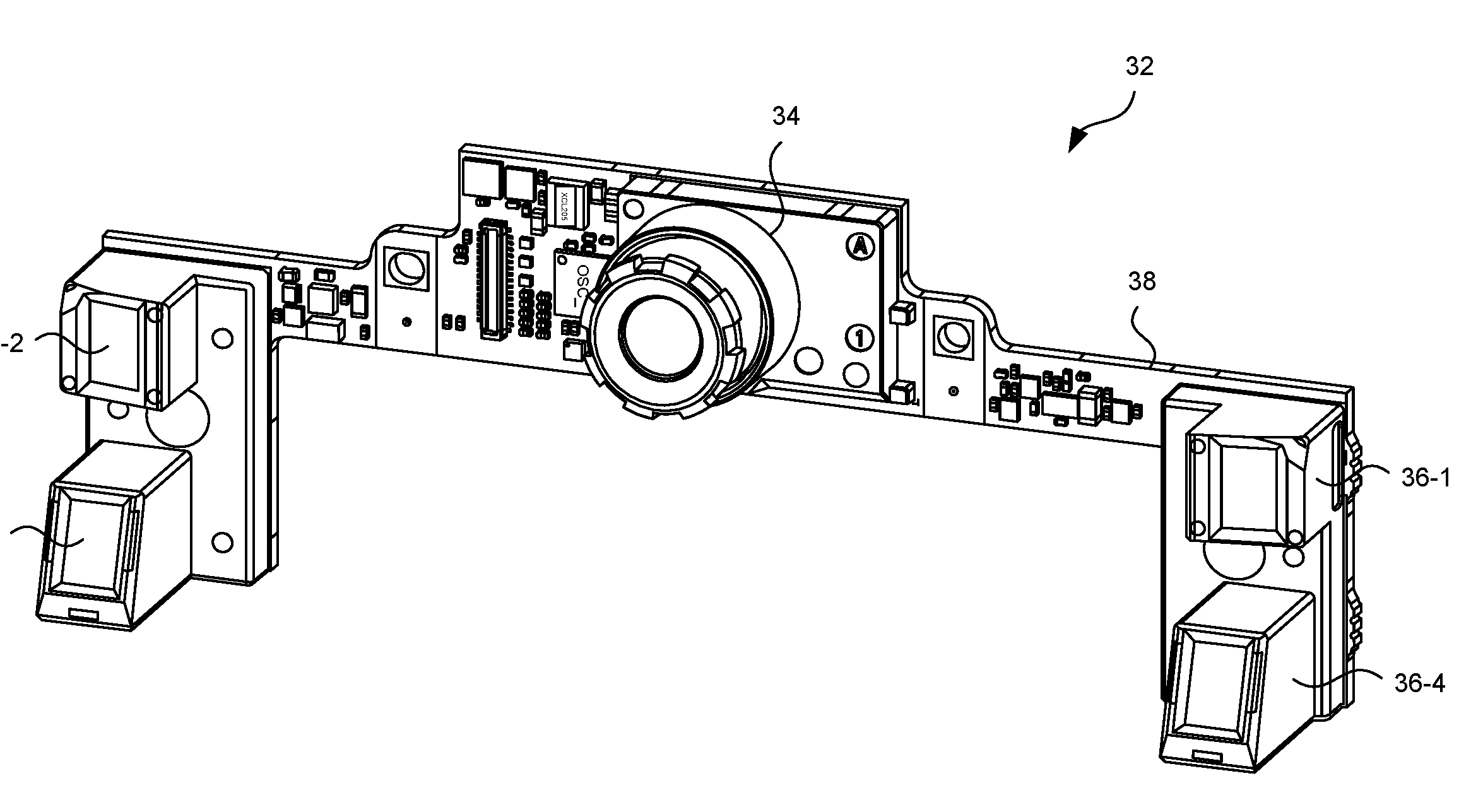

In a new patent application filed in March 2017 and published today, Microsoft describes a new multi-spectral camera which may replace the 5 cameras with just one.

The abstract for the application, MULTI-SPECTRUM ILLUMINATION-AND-SENSOR MODULE FOR HEAD TRACKING, GESTURE RECOGNITION AND SPATIAL MAPPING, reads:

A device and method use multiple light emitters with a single, multi-spectrum imaging sensor to perform multi-modal infrared light based depth sensing and visible light based Simultaneous Localization and Mapping (SLAM). The multi-modal infrared based depth sensing may include, for example, any combination of infrared-based spatial mapping, infrared based hand tracking and/or infrared based semantic labeling. The visible light based SLAM may include head tracking, for example.

Microsoft further explains the key innovation, the multi-spectrum imaging sensor, as follows:

Figure 4 schematically illustrates a multi-spectrum sensor for detecting both visible light and IR light. The multi-spectrum sensor 400 includes two different types of sensor pixels: visible light pixels and IR light pixels. The visible light pixels (each denoted by a “V” in Figure 4) are sensitive to broadband visible light (e.g., from 400 nm to 650 nm) and have limited sensitivity to IR light. The IR light pixels (each denoted by an “IR” in Figure 4) are sensitive to IR light and have limited sensitivity to optical crosstalk from the visible light. In the illustrated embodiment the IR pixels and the visible light pixels are interspersed on the sensor 400 in a checkerboard-like (i.e., two-dimensionally alternating) fashion. The multi-spectrum sensor 400 can be coupled to an IR bandpass filter (not shown in Figure 4) to minimize the amount of ambient IR light that is incident on the IR light pixels. In some embodiments the bandpass filter passes visible light with wavelengths in the range of 400-650 nm and IR narrowband (e.g., wavelength span less than 30 nm).

In some embodiments, the visible light pixels of the multi-spectrum sensor 400 collectively serve as a passive imaging sensor to collect visible light and record grayscale visible light images. The HMD device can use the visible light images for a SLAM purpose, such as head tracking. The IR light pixels of the multi-spectrum sensor 400 collectively serve as a depth camera sensor to collect IR light and record IR images (also referred to as monochrome IR images) for depth sensing, such as for hand tracking, spatial mapping and/or semantic labeling of objects.
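To make the checkerboard idea concrete, here is a minimal Python sketch of how a single raw frame from such a sensor could be separated into a visible-light image and an IR image. It is only an illustration, not Microsoft's method: the patent text quoted above does not say which checkerboard squares hold which pixel type or how the gaps are filled, so the layout rule in `is_visible_pixel`, the neighbour-averaging in `fill_gaps`, and all function names are assumptions made for this example.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]
Plane = List[List[Optional[int]]]


def is_visible_pixel(row: int, col: int) -> bool:
    """Assumed layout: visible pixels sit where (row + col) is even, IR pixels elsewhere."""
    return (row + col) % 2 == 0


def split_checkerboard(raw: Frame) -> Tuple[Plane, Plane]:
    """Split one raw mosaic frame into sparse visible and IR planes (None marks a gap)."""
    visible: Plane = []
    infrared: Plane = []
    for r, line in enumerate(raw):
        vis_row: List[Optional[int]] = []
        ir_row: List[Optional[int]] = []
        for c, value in enumerate(line):
            if is_visible_pixel(r, c):
                vis_row.append(value)
                ir_row.append(None)
            else:
                vis_row.append(None)
                ir_row.append(value)
        visible.append(vis_row)
        infrared.append(ir_row)
    return visible, infrared


def fill_gaps(plane: Plane) -> Frame:
    """Fill each gap with the rounded mean of its up/down/left/right neighbours.

    This simple interpolation is an assumption for illustration only.
    """
    height = len(plane)
    filled: Frame = []
    for r in range(height):
        out_row: List[int] = []
        for c in range(len(plane[r])):
            value = plane[r][c]
            if value is None:
                neighbours = [
                    plane[nr][nc]
                    for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                    if 0 <= nr < height
                    and 0 <= nc < len(plane[nr])
                    and plane[nr][nc] is not None
                ]
                value = round(sum(neighbours) / len(neighbours)) if neighbours else 0
            out_row.append(value)
        filled.append(out_row)
    return filled


# Made-up 3x3 frame: low values stand in for visible pixels, high values for IR pixels.
raw_frame = [
    [10, 200, 12],
    [210, 14, 220],
    [16, 230, 18],
]
visible_plane, ir_plane = split_checkerboard(raw_frame)
grayscale_image = fill_gaps(visible_plane)  # would feed SLAM / head tracking
ir_image = fill_gaps(ir_plane)              # would feed depth sensing
```

In this sketch each output image keeps the full frame size but only half of its samples are measured directly, which is the trade-off of sharing one sensor between two jobs.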

Reducing the part count of the next HoloLens has the potential to reduce the cost, size, complexity and power consumption of the device. That may mean a smaller and cheaper HoloLens 2, which is exactly what Microsoft needs to remain competitive.

The next HoloLens is expected to have an improved Holographic Processing Unit with more AI capabilities, and an improved Kinect-like depth camera. Microsoft’s main challenge with the new HoloLens is to improve the field of view, which at 35 degrees has been described as looking at the world through a mail slot. Microsoft is reportedly bringing development of the lenses in-house to achieve this at a reasonable cost.

HoloLens 2 will reportedly be powered by the recently announced Qualcomm Snapdragon XR1 processor, which has been designed with the express purpose of delivering a “high quality” VR and AR experience. The device will presumably run the ARM version of Windows 10 with Microsoft’s Mixed Reality UI.

With the next generation of the headset expected within the next 6 months, hopefully we will see many of these patented innovations show up in the actual device.
