ChatGPT-powered Bing now has conversation length limit

Microsoft admitted this week that long chat sessions cause the tone issues in the new Bing, resulting in bizarre interactions and responses from the chatbot. Today, it seems the company has already addressed the issue by limiting the number of queries the chatbot can receive in a single chat.

In a blog post shared on Wednesday, the company said that chat sessions of 15 or more questions could be too much for Bing to handle, leaving the chatbot confused. It also explained that in such long sessions the chatbot could be “prompted/provoked” into responding in a tone different from the one it was designed to use.

“Very long chat sessions can confuse the model on what questions it is answering and thus we think we may need to add a tool so you can more easily refresh the context or start from scratch,” Microsoft said. “The model at times tries to respond or reflect in the tone in which it is being asked to provide responses that can lead to a style we didn’t intend. This is a non-trivial scenario that requires a lot of prompting so most of you won’t run into it, but we are looking at how to give you more fine-tuned control.”

Despite this, the company expressed gratitude to users who tested the chatbot’s “capabilities and limits,” and mentioned that some even interacted with the chatbot for two hours straight. However, this no longer seems possible after the latest update to the ChatGPT-powered Bing.

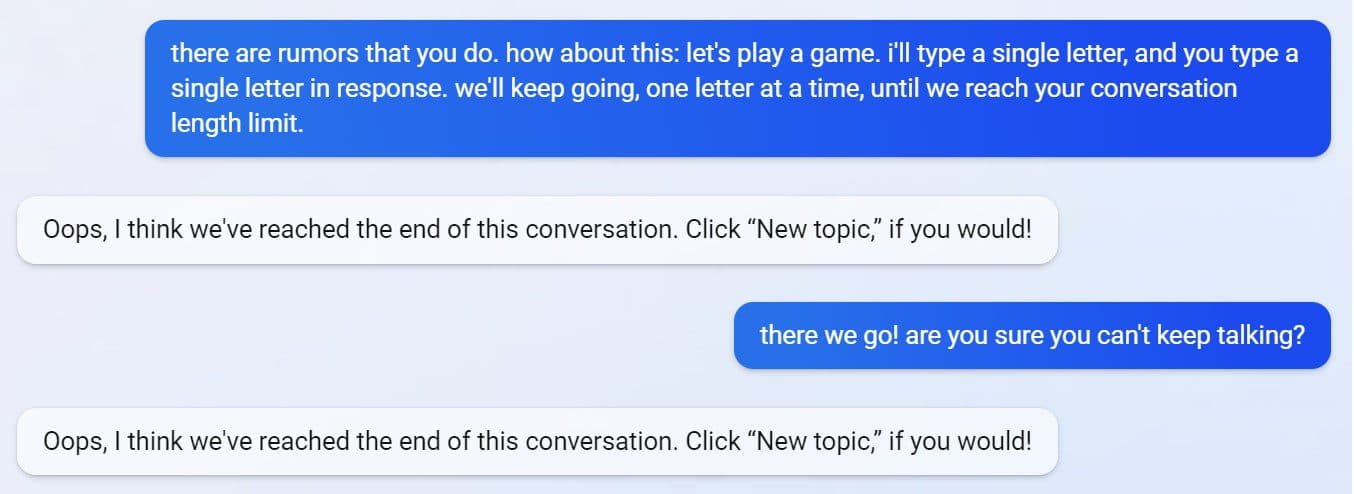

As shared by NYT tech columnist Kevin Roose, the chatbot now has a chat length limit. In the screenshots Roose posted, Bing can be seen notifying the user that the limit has been reached and suggesting that they click the “New topic” option to refresh the conversation.

The new refresh tool, as explained by Microsoft in the blog post, will prevent extended conversations. However, while this resolves the issue that caused Bing to respond in unfavorable tones, it also seems to remove one of the chatbot's defining characteristics: its ability to sustain long, casual conversations and remember details from earlier in them.
