Apple asks to be trusted, but it turns out many people don't trust it

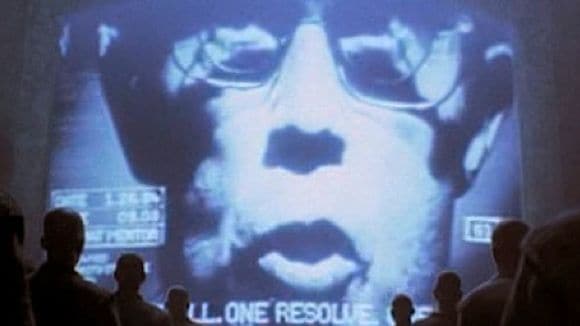

Apple has continued to firefight the backlash over its plan to scan iPhone users’ phones for child sexual abuse material (CSAM), mainly by painting those who are alarmed by the move as confused. Apple’s Craig Federighi told the Wall Street Journal:

“It’s really clear a lot of messages got jumbled pretty badly in terms of how things were understood. We wish that this would’ve come out a little more clearly for everyone because we feel very positive and strongly about what we’re doing.”

Many critics, such as the EFF, do of course understand what Apple is doing, and have grave reservations about Apple scanning for illegal material on the device itself. They see it as akin to sending a police officer to search your house, and as a line that no other cloud storage provider has crossed before.

For most, the concern is not what the system does now, but what it could be made to do tomorrow, under the right pressure.

To those people Federighi did have an answer, saying:

“We ship the same software in China with the same database we ship in America, as we ship in Europe. If someone were to come to Apple [with a request to scan for data beyond CSAM], Apple would say no. But let’s say you aren’t confident. You don’t want to just rely on Apple saying no. You want to be sure that Apple couldn’t get away with it if we said yes,” he told the Journal. “There are multiple levels of auditability, and so we’re making sure that you don’t have to trust any one entity, or even any one country, as far as what images are part of this process.”

Unfortunately, Federighi did not expand on Apple’s audit process, merely saying that there will be an independent auditor who can verify the images involved.

Federighi did, however, count hackers and security researchers as part of the audit trail, saying:

“Imagine someone was scanning images in the cloud. Well, who knows what’s being scanned for?” Federighi said, referring to remote scans. “In our case, the database is shipped on device. People can see, and it’s a single image across all countries.”

Apple’s reassurances are, however, light on details, and the company of course actively resists security researchers examining its operating system, for example by storing the database of CSAM hashes in the secure enclave of the iPhone.

The biggest issue, however, is a matter of trust: many concerned users simply do not trust Apple to put their interests ahead of its greed, as has been demonstrated numerous times by Apple’s anti-consumer behaviour, and it is a bit late for Apple to rely on trust that it lost a long time ago.

via The Verge