Microsoft DeBERTa surpasses puny humans on the SuperGLUE language understanding benchmark

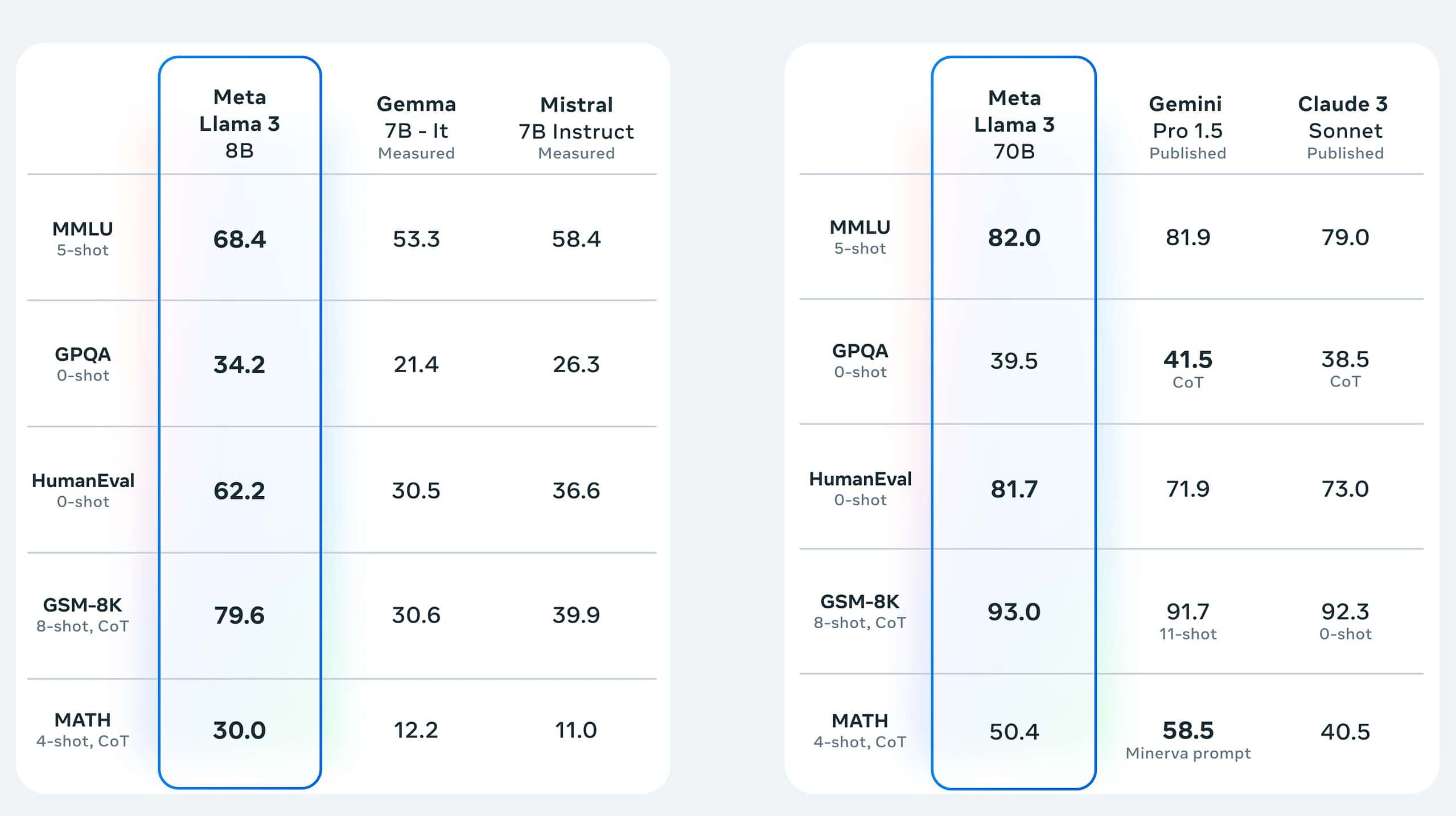

There has been massive progress recently in training language models with billions of parameters. Microsoft recently updated its DeBERTa (Decoding-enhanced BERT with disentangled attention) model by training a larger version that consists of 48 Transformer layers with 1.5 billion parameters. The significant performance boost makes a single DeBERTa model surpass human performance on the SuperGLUE language understanding benchmark for the first time in terms of macro-average score (89.9 versus 89.8), while an ensemble of DeBERTa models outperforms the human baseline by a decent margin (90.3 versus 89.8). The SuperGLUE benchmark consists of a wide range of natural language understanding tasks, such as question answering and natural language inference. The model also sits at the top of the GLUE benchmark rankings with a macro-average score of 90.8.

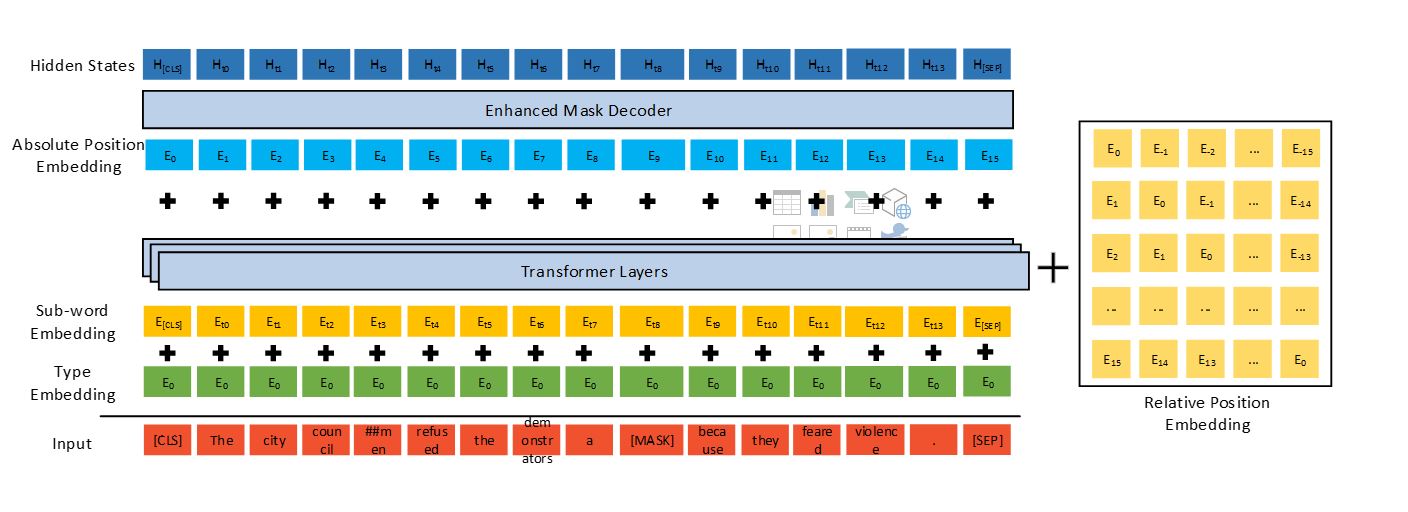

DeBERTa improves on previous state-of-the-art pretrained language models (PLMs), such as BERT, RoBERTa, and UniLM, using three novel techniques: a disentangled attention mechanism, an enhanced mask decoder, and a virtual adversarial training method for fine-tuning.
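To give a rough idea of the first technique, here is a minimal single-head sketch in pure Python, based on our reading of the DeBERTa paper. Each token gets separate content and relative-position representations, and the attention score between tokens i and j is the sum of three terms (content-to-content, content-to-position, and position-to-content), scaled by 1/sqrt(3d). The helper names (`rel_bucket`, `disentangled_attention`), the random weights, and the window size `k` are illustrative assumptions; this is not Microsoft's released code, which is multi-headed, batched, and far more optimized.

```python
import math
import random

def matmul(a, b):
    # Plain list-of-lists matrix product: (n x m) @ (m x p) -> (n x p)
    return [[sum(a[i][t] * b[t][j] for t in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def softmax(row):
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    s = sum(exps)
    return [e / s for e in exps]

def rel_bucket(i, j, k):
    # Hypothetical helper: clip the relative distance i - j into [0, 2k - 1]
    d = i - j
    if d <= -k:
        return 0
    if d >= k:
        return 2 * k - 1
    return d + k

def disentangled_attention(H, P, Wqc, Wkc, Wvc, Wqr, Wkr, k):
    # H: N x d content vectors; P: 2k x d relative-position embeddings
    Qc, Kc, Vc = matmul(H, Wqc), matmul(H, Wkc), matmul(H, Wvc)
    Qr, Kr = matmul(P, Wqr), matmul(P, Wkr)
    n, d = len(H), len(Wqc[0])
    scale = math.sqrt(3 * d)  # three score terms, hence 3d
    out = []
    for i in range(n):
        scores = []
        for j in range(n):
            c2c = dot(Qc[i], Kc[j])                    # content-to-content
            c2p = dot(Qc[i], Kr[rel_bucket(i, j, k)])  # content-to-position
            p2c = dot(Kc[j], Qr[rel_bucket(j, i, k)])  # position-to-content
            scores.append((c2c + c2p + p2c) / scale)
        weights = softmax(scores)
        # Output row i is an attention-weighted average of the value vectors
        out.append([sum(weights[j] * Vc[j][t] for j in range(n)) for t in range(d)])
    return out

# Toy usage with random (hypothetical) weights
random.seed(0)

def rand_matrix(r, c):
    return [[random.gauss(0, 1) for _ in range(c)] for _ in range(r)]

N, d, k = 5, 4, 2
H = rand_matrix(N, d)
P = rand_matrix(2 * k, d)
W = [rand_matrix(d, d) for _ in range(5)]  # Wqc, Wkc, Wvc, Wqr, Wkr
out = disentangled_attention(H, P, *W, k)
print(len(out), len(out[0]))  # prints: 5 4
```

The point of keeping position separate from content is that the model can weigh "what a word is" and "where it is relative to another word" independently, rather than adding both into a single vector as BERT does.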

Compared with Google’s T5 model, which has 11 billion parameters, the 1.5-billion-parameter DeBERTa is much more energy-efficient to train and maintain, and it is easier to compress and deploy in apps across a variety of settings.

DeBERTa surpassing human performance on SuperGLUE marks an important milestone toward general AI. Despite its promising results on SuperGLUE, however, the model is by no means reaching human-level natural language understanding. Humans are extremely good at leveraging knowledge learned from different tasks to solve a new task with little or no task-specific demonstration.

Microsoft will integrate the technology into the next version of the Microsoft Turing natural language representation model, which is used in products such as Bing, Office, Dynamics, and Azure Cognitive Services. There, it will power a wide range of scenarios involving human-machine and human-human interactions via natural language, such as chatbots, recommendation, question answering, search, personal assistance, customer support automation, and content generation. In addition, Microsoft will release the 1.5-billion-parameter DeBERTa model and its source code to the public.

Read all the details from Microsoft here.