Microsoft AI researchers accidentally leaked 38TB of internal data

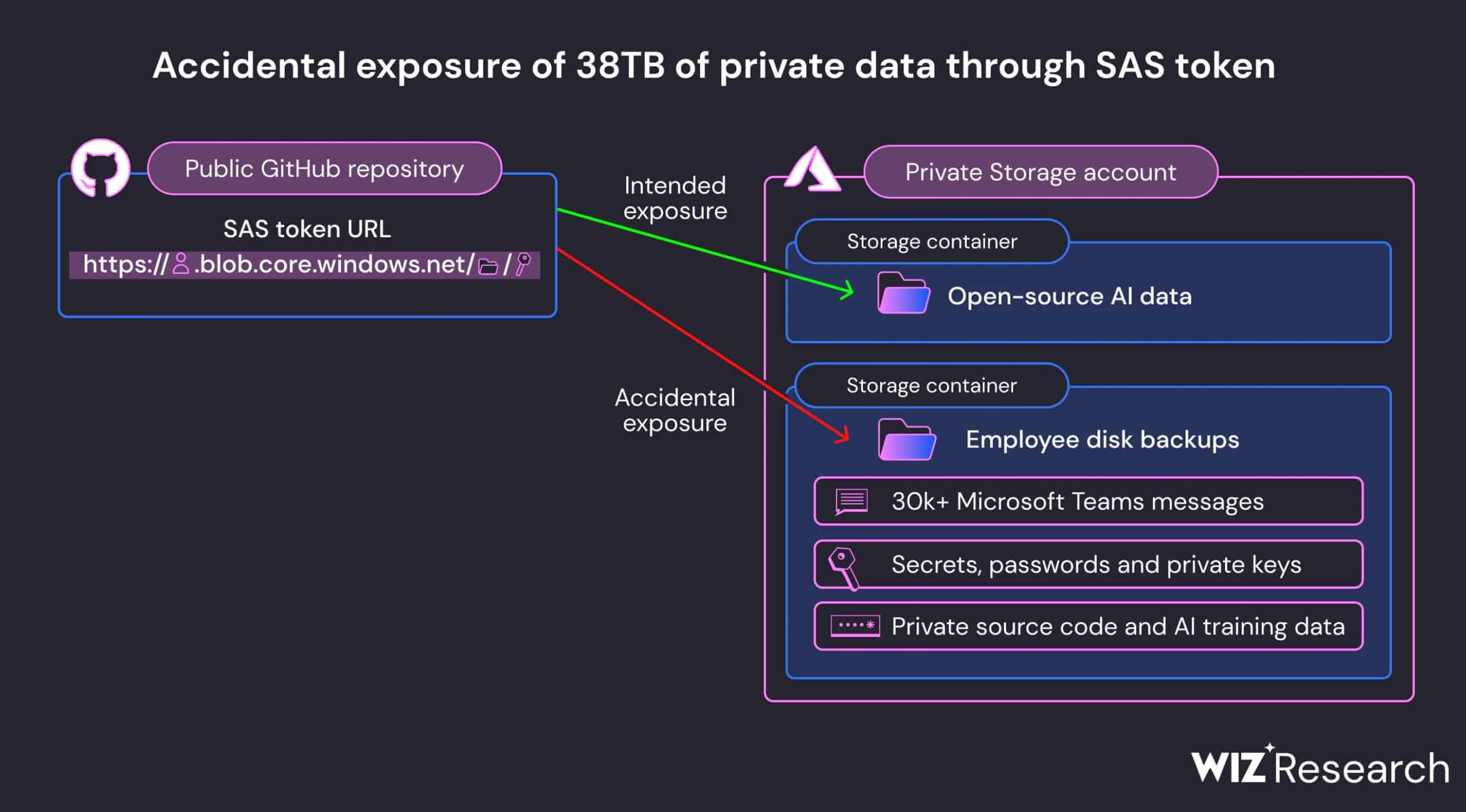

Today, Wiz Research revealed that Microsoft’s AI GitHub repository leaked 38TB of internal company data. While publishing open-source training data on GitHub, Microsoft’s AI research team accidentally exposed 38 terabytes of additional private data, including disk backups belonging to a couple of Microsoft employees. The disk backups contained secrets, private keys, passwords, and over 30,000 internal Microsoft Teams messages.

Microsoft Azure Storage allows customers to share their data with others using Shared Access Signature (SAS) tokens. However, it doesn’t provide an easy way to manage SAS tokens, and they are difficult to track. Microsoft researchers accidentally published a SAS token for their Azure Storage account, which led to this huge leak. It is highly recommended to avoid using SAS tokens for external sharing, as they can easily go unnoticed and expose sensitive data.
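To make the risk concrete, here is a minimal Python sketch of how anyone holding a SAS URL can read off what it grants. It assumes the usual SAS query fields (`sp` for permissions, `se` for expiry, `sig` for the signature). The function name `describe_sas_url`, the partial permission map, and the example URL are hypothetical and are not taken from the leaked token.

```python
from urllib.parse import urlsplit, parse_qs

# Partial map of the permission letters that can appear in the `sp` field.
PERMISSIONS = {
    "r": "read",
    "a": "add",
    "c": "create",
    "w": "write",
    "d": "delete",
    "l": "list",
}

def describe_sas_url(url: str) -> dict:
    """Return the resource, expiry, and granted permissions of a SAS URL."""
    parts = urlsplit(url)
    query = parse_qs(parts.query)
    perms = query.get("sp", [""])[0]
    return {
        "resource": f"{parts.scheme}://{parts.netloc}{parts.path}",
        "expires": query.get("se", [None])[0],
        # Unknown letters are passed through unchanged.
        "permissions": [PERMISSIONS.get(p, p) for p in perms],
        "signed": "sig" in query,
    }

# Made-up URL for illustration only: account, container, and signature are fake.
example = (
    "https://exampleaccount.blob.core.windows.net/models"
    "?sv=2021-08-06&sr=c&sp=racwdl&se=2051-10-06T00:00:00Z&sig=REDACTED"
)
info = describe_sas_url(example)
print(info)
```

Because the permissions and expiry travel inside the URL itself, a link pasted into a public repository carries everything needed to use it until it expires or is revoked.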

Wiz Research reported this issue to MSRC on June 22, 2023, and Microsoft invalidated the SAS token two days later. In August, Microsoft completed its internal investigation of the potential impact. According to that investigation, no customer data was exposed and no other internal services were put at risk because of this issue. No customer action is required in response.

GitHub’s secret scanning service monitors all public open-source code changes for plaintext exposure of credentials and other secrets. The service already flags Azure Storage SAS URLs that point to sensitive content, such as VHDs and private cryptographic keys. After this issue, Microsoft expanded the detection to include any SAS token that may have an overly permissive expiration or privileges. Microsoft is also running complete historical rescans of all public repositories in Microsoft-owned or affiliated organizations and accounts to avoid similar issues.
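The kind of check described above can be sketched in a few lines of Python. This is not GitHub’s or Microsoft’s detection logic. It is an illustrative scanner under assumed policy values: the regular expression, the seven-day `MAX_LIFETIME` threshold, the `RISKY_PERMISSIONS` set, and the sample text are all hypothetical.

```python
import re
from datetime import datetime, timedelta, timezone
from urllib.parse import urlsplit, parse_qs

# Rough pattern: a URL on an Azure Storage host that carries a `sig=` parameter.
SAS_URL = re.compile(r"https://[\w.-]+\.core\.windows\.net/\S*?[?&]sig=\S+")

MAX_LIFETIME = timedelta(days=7)   # assumed policy threshold
RISKY_PERMISSIONS = set("wdc")     # write, delete, create (assumed policy)

def find_risky_sas(text: str, now: datetime) -> list[tuple[str, list[str]]]:
    """Return (url, reasons) for each SAS URL in text that looks too permissive."""
    findings = []
    for match in SAS_URL.finditer(text):
        url = match.group(0)
        query = parse_qs(urlsplit(url).query)
        reasons = []
        expiry_raw = query.get("se", [""])[0]
        try:
            # Only the full UTC timestamp form is handled in this sketch.
            expiry = datetime.strptime(expiry_raw, "%Y-%m-%dT%H:%M:%SZ")
            expiry = expiry.replace(tzinfo=timezone.utc)
            if expiry - now > MAX_LIFETIME:
                reasons.append("long-lived")
        except ValueError:
            reasons.append("unparseable expiry")
        if RISKY_PERMISSIONS & set(query.get("sp", [""])[0]):
            reasons.append("write-capable")
        if reasons:
            findings.append((url, reasons))
    return findings

# Made-up repository content; both URLs are fake.
sample = """
model_url = https://exampleaccount.blob.core.windows.net/models?sv=2021-08-06&sr=c&sp=racwdl&se=2051-10-06T00:00:00Z&sig=REDACTED
docs_url = https://exampleaccount.blob.core.windows.net/public?sv=2021-08-06&sr=c&sp=r&se=2023-06-25T00:00:00Z&sig=REDACTED
"""
findings = find_risky_sas(sample, now=datetime(2023, 6, 22, tzinfo=timezone.utc))
for url, reasons in findings:
    print(reasons, url)
```

In this sample only the first URL is flagged, because it is both long-lived and write-capable. The second is read-only and expires within the assumed seven-day window.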
