What is SynthID: YouTube's inaudible watermarks for its AI-generated music

SynthID is a technology that generates imperceptible digital watermarks for AI-generated music and images. It embeds a watermark directly into AI-generated audio or images; humans cannot notice the watermark, but it can be detected later to identify the content as AI-generated.

The technology was developed by Google DeepMind and refined in partnership with Google Research. SynthID could potentially be applied to other AI models and is expected to be integrated into more products, with the aim of helping people and organizations work with AI-generated content responsibly.

How does this watermarking work?

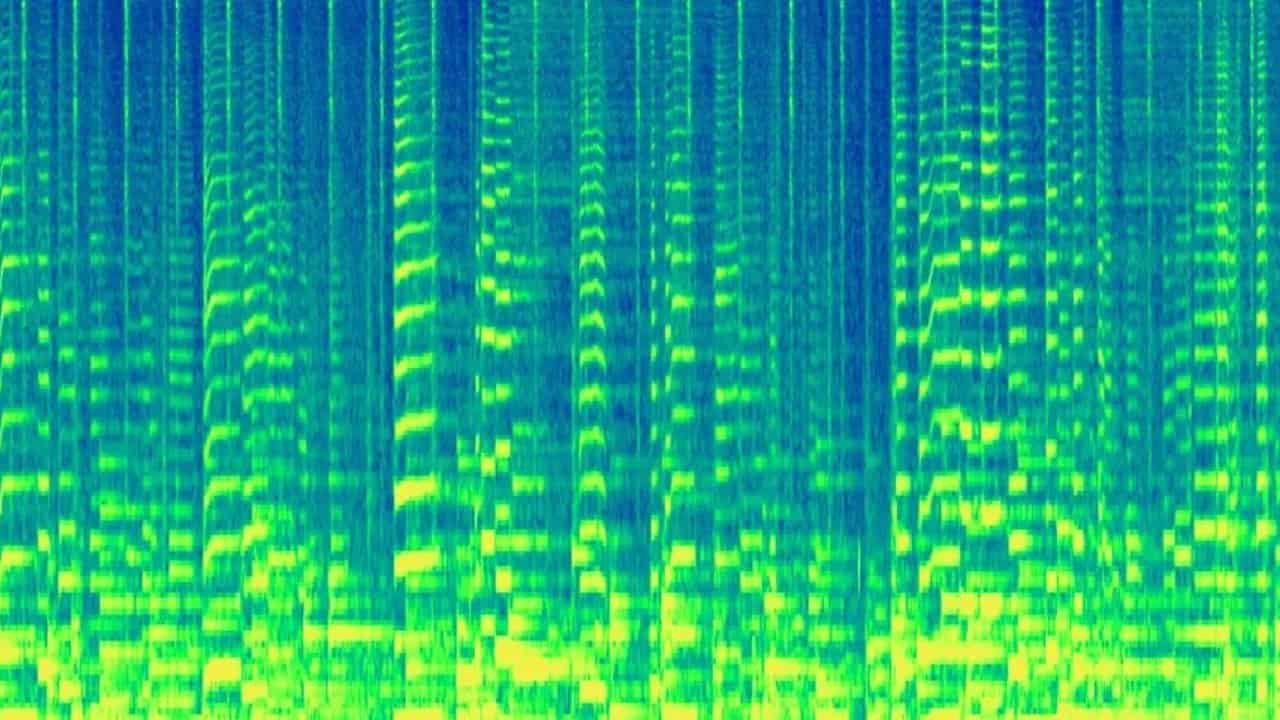

To embed a watermark in audio, SynthID converts the audio wave into a spectrogram (a representation of how the sound's frequency content changes over time), adds the watermark, and then converts the result back into an audio wave. The process is designed to keep the watermark inaudible to humans while making it resistant to common modifications.
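
To make the embed-then-resynthesize loop concrete, here is a minimal toy sketch in Python. It is not SynthID's algorithm, which this article does not describe; it swaps in a classic spread-spectrum idea instead. Assumptions: a secret integer `key` seeds a pseudorandom ±1 pattern, the audio is a plain list of float samples, the "spectrogram" uses non-overlapping 128-sample frames with no windowing, and all names (`embed`, `detect`, `FRAME`, `strength`) are hypothetical. Each frequency bin's magnitude is nudged up or down according to the pattern, the frames are converted back into a waveform, and detection correlates the recomputed magnitudes with the same pattern.

```python
import cmath
import math
import random

FRAME = 128                  # samples per analysis frame (toy value, not SynthID's)
BINS = range(1, FRAME // 2)  # bins we modulate; DC and Nyquist are left untouched


def _twiddles(n):
    """Precompute e^(-2*pi*i*m/n) for m = 0..n-1."""
    return [cmath.exp(-2j * math.pi * m / n) for m in range(n)]


def _dft(frame, tw, inverse=False):
    """Naive O(n^2) DFT (or inverse DFT); slow but dependency-free."""
    n = len(frame)
    sign = -1 if inverse else 1
    out = []
    for k in range(n):
        acc = sum(x * tw[(sign * k * t) % n] for t, x in enumerate(frame))
        out.append(acc / n if inverse else acc)
    return out


def spectrogram(audio):
    """Complex spectrum of each non-overlapping FRAME-sample frame (no window)."""
    tw = _twiddles(FRAME)
    return [_dft(audio[i:i + FRAME], tw)
            for i in range(0, len(audio) - FRAME + 1, FRAME)]


def _frame_patterns(key):
    """Yield a fresh key-seeded +/-1 pattern over BINS for each successive frame."""
    rng = random.Random(key)
    while True:
        yield {k: rng.choice((-1.0, 1.0)) for k in BINS}


def embed(audio, key, strength=0.2):
    """Scale each bin's magnitude by (1 +/- strength), then resynthesize the wave."""
    tw = _twiddles(FRAME)
    out = []
    for spec, pattern in zip(spectrogram(audio), _frame_patterns(key)):
        for k, s in pattern.items():
            gain = 1.0 + strength * s
            spec[k] *= gain
            spec[FRAME - k] *= gain  # mirror bin keeps the inverse transform real
        out.extend(v.real for v in _dft(spec, tw, inverse=True))
    out.extend(audio[len(out):])  # samples past the last full frame pass through
    return out


def detect(audio, key):
    """Correlate spectrogram magnitudes with the key's pattern.

    Roughly equal to the embedding strength for marked audio, close to 0 otherwise.
    """
    num = den = 0.0
    for spec, pattern in zip(spectrogram(audio), _frame_patterns(key)):
        for k, s in pattern.items():
            mag = abs(spec[k])
            num += s * mag
            den += mag
    return num / den if den else 0.0


if __name__ == "__main__":
    rng = random.Random(0)
    clean = [rng.gauss(0.0, 1.0) for _ in range(FRAME * 64)]  # white-noise stand-in
    marked = embed(clean, key=42)
    print(f"clean:  {detect(clean, key=42):+.3f}")
    print(f"marked: {detect(marked, key=42):+.3f}")
```

The naive DFT keeps the example dependency-free but is slow. A practical system would more likely use an FFT with overlapping, windowed frames and keep the changes small enough to stay inaudible; the strength used here is chosen for a clear demonstration and would probably be audible.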

For AI-generated images, SynthID embeds the watermark directly into the image's pixels, where it is invisible to the naked eye. The watermark is designed to withstand alterations such as applying filters, changing colors, and lossy compression like JPEG, and to remain detectable after such edits without noticeably reducing image quality.
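
A similarly hedged toy sketch for images follows. Again, this is not SynthID's method, just spatial-domain spread spectrum. It assumes a flattened 8-bit grayscale image and a hypothetical secret `key` and nudge size `delta`, and it shows only why a correlation-based watermark can survive a uniform brightness change and mild noise. It is not designed to survive JPEG compression or filters.

```python
import random


def _pattern(key, n):
    """Key-seeded +/-1 value for each of n pixels (hypothetical toy scheme)."""
    rng = random.Random(key)
    return [rng.choice((-1, 1)) for _ in range(n)]


def embed(pixels, key, delta=3):
    """Nudge each 8-bit grayscale pixel up or down by `delta`, clipped to 0..255."""
    pattern = _pattern(key, len(pixels))
    return [min(255, max(0, p + delta * s)) for p, s in zip(pixels, pattern)]


def detect(pixels, key):
    """Average of (pixel - mean) * pattern.

    Close to `delta` for a marked image and close to 0 otherwise. Subtracting the
    mean makes the score ignore a uniform brightness shift, as long as no pixels clip.
    """
    pattern = _pattern(key, len(pixels))
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) * s for p, s in zip(pixels, pattern)) / len(pixels)


if __name__ == "__main__":
    rng = random.Random(1)
    image = [rng.randint(100, 156) for _ in range(128 * 128)]  # flattened 128x128 test image
    marked = embed(image, key=42)
    brighter = [min(255, p + 20) for p in marked]               # a simple global edit
    print(f"original: {detect(image, key=42):.2f}")
    print(f"marked:   {detect(marked, key=42):.2f}")
    print(f"brighter: {detect(brighter, key=42):.2f}")
```

Spreading a tiny change over thousands of pixels is what lets the correlation stand out from the image content while each individual pixel moves by only a few levels.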

SynthID can also scan an audio track or image to check for the watermark. It reports its results with confidence levels, which help users judge whether the content was generated by a specific AI model, such as Lyria for music or Imagen for images.
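
The following Python sketch shows one way a raw detector score could be turned into a confidence level. It rests on stated assumptions: the watermark is a key-seeded ±1 pattern added to some extracted feature values, and the labels and thresholds (`strong`, `weak`) are hypothetical, not SynthID's. The score is normalized so that unmarked content yields an approximately standard-normal value, which can then be converted into a p-value.

```python
import math
import random


def z_score(values, key):
    """Normalized correlation of `values` with a key-seeded +/-1 pattern.

    If the pattern was never embedded, the signs are independent of the values, so
    the sum below has mean 0 and variance sum(v * v); z is then roughly standard
    normal. An embedded pattern pushes z well above 0.
    """
    rng = random.Random(key)
    pattern = [rng.choice((-1.0, 1.0)) for _ in values]
    num = sum(s * v for s, v in zip(pattern, values))
    den = math.sqrt(sum(v * v for v in values))
    return num / den if den else 0.0


def confidence(z, strong=5.0, weak=3.0):
    """Turn z into a one-sided p-value and a coarse label (hypothetical thresholds)."""
    p_value = 0.5 * math.erfc(z / math.sqrt(2))  # P(Z >= z) for a standard normal Z
    if z >= strong:
        label = "watermark detected"
    elif z >= weak:
        label = "possibly watermarked"
    else:
        label = "not detected"
    return label, p_value


if __name__ == "__main__":
    rng = random.Random(3)
    features = [rng.gauss(0.0, 1.0) for _ in range(10_000)]   # stand-in for extracted features
    key_rng = random.Random(99)
    pattern = [key_rng.choice((-1.0, 1.0)) for _ in features]  # same pattern z_score derives
    marked = [v + 0.2 * s for v, s in zip(features, pattern)]
    for name, data in (("unmarked", features), ("marked", marked)):
        print(name, confidence(z_score(data, key=99)))
```

Normalizing against the "no watermark" distribution means each threshold maps to an approximate false-positive rate: with `weak=3.0`, unmarked content would be labeled "possibly watermarked" roughly 0.13% of the time.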

It’s fair to say that SynthID isn’t a comprehensive solution for mitigating misinformation, but it is an early technical approach to building trust in AI-generated content by making that content traceable and identifiable. Alongside this, YouTube is now labeling videos that contain AI-generated content.
