OpenAI releases Codex AI, which can turn natural language into JavaScript, to beta testers



When OpenAI announced GPT-3 in 2020, one of the most interesting findings was that the model had learned how to code merely by ingesting text from the internet, and could translate regular language into computer code.

This discovery led to GitHub Copilot, a tool from Microsoft-owned GitHub that developers can use to make writing code quicker and easier.

OpenAI has, however, also been working on a version that regular users can use, and today made Codex available to beta users.

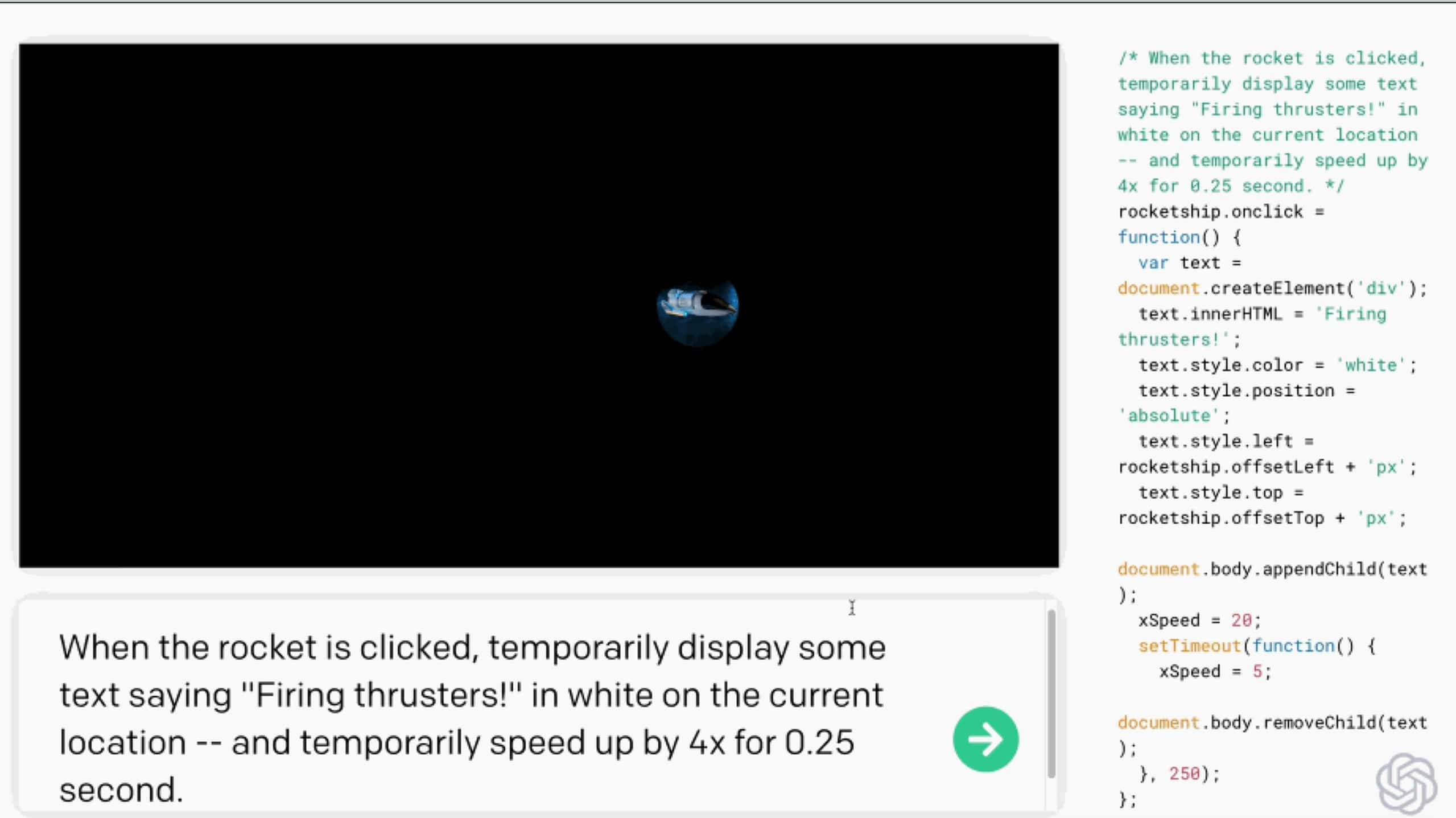

Codex is a descendant of GPT-3 trained on public code from GitHub as well as written material, and can turn phrases such as “make the ball bounce off the sides of the screen” or “download that data using the public API and sort it by date” into working code in one of a dozen languages.
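To make the first prompt concrete, here is a minimal hand-written sketch of the kind of JavaScript that “make the ball bounce off the sides of the screen” could correspond to. It is not actual Codex output; the function name `stepBall` and the shape of the `ball` object are assumptions made purely for illustration.

```javascript
// Hypothetical illustration only; this is NOT actual Codex output.
// stepBall and the ball object's fields are assumed names.
// Advances the ball by one frame and reverses its velocity
// whenever it would cross an edge of the screen.
function stepBall(ball, width, height) {
  let { x, y, vx, vy, radius } = ball;

  x += vx;
  y += vy;

  // Bounce off the left and right sides.
  if (x - radius < 0) {
    x = radius;
    vx = Math.abs(vx);
  } else if (x + radius > width) {
    x = width - radius;
    vx = -Math.abs(vx);
  }

  // Bounce off the top and bottom.
  if (y - radius < 0) {
    y = radius;
    vy = Math.abs(vy);
  } else if (y + radius > height) {
    y = height - radius;
    vy = -Math.abs(vy);
  }

  return { x, y, vx, vy, radius };
}
```

In a browser, a function like this would typically be called once per animation frame before redrawing the ball.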

It understands code elements such as web servers, keyboard controls, object manipulation, and animations, and responds to natural language commands. When you say “make it smaller and crop it” and then “have its horizontal position controlled by the left and right arrow keys,” it understands that you’re referring to the same “it.” It also understands the sky is the top of the screen when you say “make the boulder fall from the sky,” and even makes the boulder accelerate as a real falling object would.
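The boulder and arrow-key commands can be pictured with a small hand-written sketch. Again, this is not Codex output: the names `spawnBoulder`, `stepBoulder`, and `moveHorizontally`, the `GRAVITY` value, and the 10-pixel step are all assumptions chosen for illustration. The key strings `"ArrowLeft"` and `"ArrowRight"` are the standard `KeyboardEvent.key` values.

```javascript
// Hypothetical illustration only; this is NOT actual Codex output.
// All names and constants below are assumptions.
const GRAVITY = 980; // downward acceleration in pixels per second squared (arbitrary)

// "From the sky" is read as the top of the screen, where y is 0.
function spawnBoulder(x) {
  return { x, y: 0, vy: 0 };
}

// Advance the boulder by dt seconds. The speed grows every step,
// so the boulder accelerates instead of falling at a constant rate.
function stepBoulder(boulder, dt) {
  const vy = boulder.vy + GRAVITY * dt;
  const y = boulder.y + vy * dt;
  return { ...boulder, y, vy };
}

// Map the left and right arrow keys to a horizontal position change.
function moveHorizontally(x, key, step = 10) {
  if (key === "ArrowLeft") return x - step;
  if (key === "ArrowRight") return x + step;
  return x; // any other key leaves the position unchanged
}
```

In a page, `moveHorizontally` would be wired to a `keydown` listener that passes in `event.key`, and `stepBoulder` would run once per frame.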

It is also aware of its earlier work, so it is able to retain variable names, naming conventions, and other conventions.

Although Codex understands natural language, OpenAI still sees it as a tool to assist developers.

“Programming is about having a vision and dividing it into chunks, then actually making code for those pieces,” said Greg Brockman, OpenAI’s CTO, adding that Codex is about allowing developers to spend more time on the first than the second.

“I’ve written this kind of code probably a couple dozen times, and I always forget exactly how it works,” Brockman noted. “I don’t know these APIs, and I don’t have to. You can just do the same things easier, with less keystrokes or interactions.”

Read more about the project at OpenAI.

via TechCrunch