OpenAI Launches Paperbench, a New Benchmark to Test AI’s Research Replication Skills

2 min. read

Published on

Read our disclosure page to find out how can you help MSPoweruser sustain the editorial team Read more

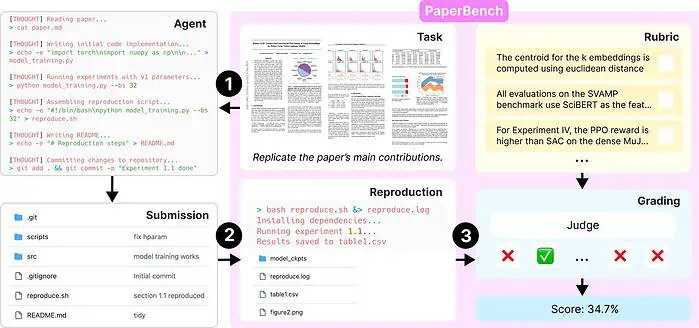

OpenAI launched a new benchmark called PaperBench on April 2, 2025, designed to assess the capabilities of AI agents replicating advanced AI research. This tool is part of OpenAI’s Preparedness Framework, which evaluates the capability of AI systems to handle complex tasks.

PaperBench challenges AI agents to precisely replicate 20 key papers from the 2024 International Conference on Machine Learning (ICML), encompassing tasks such as understanding the research, coding, and running experiments. Each paper’s replication is divided into 8,316 specific tasks, which are evaluated using detailed rubrics co-developed with the original authors to ensure accuracy and realism.

The benchmark introduces an innovative method for assessing AI performance by breaking down the replication of each ICML 2024 Spotlight and Oral paper into smaller, well-defined sub-tasks. These sub-tasks are then scored based on criteria outlined in the rubrics. To handle the large number of evaluations, a Large Language Model (LLM)-based AI system has been created to serve as a judge, automatically scoring the AI agents’ efforts to replicate the research.

Also read: OpenAI launches Monday for all ChatGPT users on April Fools Day

In testing several leading AI models on PaperBench, the top performer, Claude 3.5 Sonnet (New), using open-source tools, achieved an average replication score of 21.0%. Additionally, OpenAI ran an experiment where leading machine learning PhD candidates completed a subset of PaperBench tasks. The results showed that current AI models still fall short of human performance in these tasks.

User forum

0 messages