Microsoft Azure AI unveils 'Prompt Shields' to combat LLM manipulation

2 min. read

Published on

Read our disclosure page to find out how can you help MSPoweruser sustain the editorial team Read more

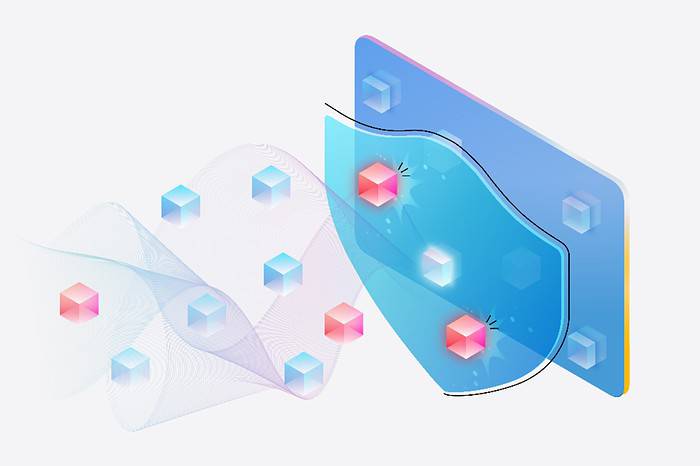

Microsoft today announced a major security enhancement for its Azure OpenAI Service and Azure AI Content Safety platforms. Dubbed “Prompt Shields,” the new feature offers robust defense against increasingly sophisticated attacks targeting large language models (LLMs).

Prompt Shields protects against:

- Direct Attacks: Also known as jailbreak attacks, these attempts explicitly instruct the LLM to disregard safety protocols or perform malicious actions.

- Indirect Attacks: These attacks subtly embed harmful instructions within seemingly normal text, aiming to trick the LLM into undesirable behavior.

Prompt Shields is integrated with Azure OpenAI Service content filters and are available in Azure AI Content Safety. Thanks to advanced machine learning algorithms and natural language processing, Prompt Shields can identify and neutralize potential threats in user prompts and third-party data.

Spotlighting: A Novel Defense Technique

Microsoft also introduced “Spotlighting,” a specialized prompt engineering approach designed to thwart indirect attacks. Spotlighting techniques, such as delimiting and datamarking, help LLMs clearly distinguish between legitimate instructions and potentially harmful embedded commands.

Availability

Prompt Shields is currently in public preview as part of Azure AI Content Safety and will be available within the Azure OpenAI Service on April 1st. Integration into Azure AI Studio is planned in the near future.