Google's Gemini AI to Power Robots; Here's What You Should Know

2 min. read

Published on

Read our disclosure page to find out how can you help MSPoweruser sustain the editorial team Read more

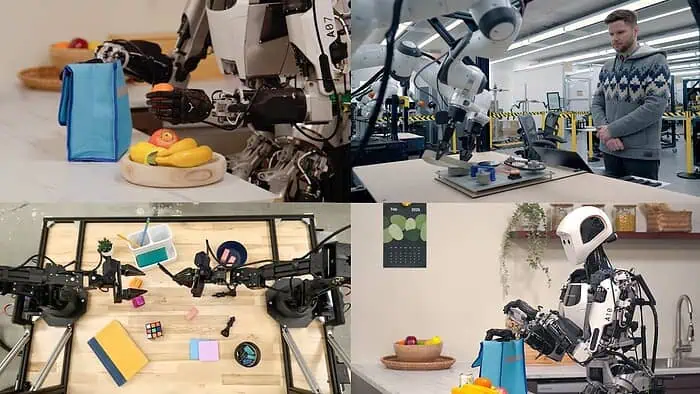

In a recent announcement for its DeepMind laboratory, Google has unveiled two new advanced AI models, Gemini Robotics and Gemini Robotics-ER. Both these models use Gemini to enhance robotic capabilities in real-world environments. Built upon the Gemini 2.0 architecture, these AIs can integrate vision, language, and action into robots, thereby allowing them to perform more complex tasks.

Also read: OpenAI launches Monday for all ChatGPT users on April Fools Day

Google’s Gemini Robotics allows robots to understand tasks and execute them using visual and linguistic inputs. Some examples of tasks could be folding origami, organizing workspaces, or even preparing meals. Its adaptability extends to various robotic forms, including bi-armed platforms like ALOHA 2 and humanoid robots such as Apptronik’s Apollo. ?

Complementing this, Gemini Robotics-ER enhances spatial reasoning, enabling robots to comprehend their surroundings more effectively. This advancement allows for improved object detection, trajectory planning, and interaction with dynamic environments. ?

Carolina Prada, the head of Google Robotics, shared, “What comes naturally to humans is difficult for robots.” She further explained that “Dexterity requires both spatial reasoning and complex physical manipulation. Across testing, Gemini Robotics has set a new state-of-the-art for dexterity, solving complex multi-step tasks with smooth motions and great completion times.”

Google’s initiative to integrate AI models into robotic systems is an important step in transforming industries by automating intricate tasks. This will further help save time, increase efficiency for completing the workload, and explore further possibilities in technological advancement.

User forum

0 messages